Electromagnetism Secretly Runs the World

A Co-Written Essay with Arena Physica CEO Pratap Ranade

Hi friends 👋 ,

Happy Tuesday! Welcome to our newest installment in what has become an unintentional two-part series on non-LLM models that can do things that humans can’t, things that will give us superhuman abilities in the physical world. They’re also both co-written with founders you’d expect to find in SF but are building right here in the greatest city in the world, NYC.

The first was last week’s essay on World Models with Pim de Witte.

Today’s is about machines that can intuit electromagnetic fields in a way almost no humans can, and that will help us design and build better electromagnetic (EM) systems.

As you know, I’m very bullish on the growing role of electromagnetic systems in the economy. After Sam and I wrote The Electric Slide, Arena Physica CEO Pratap Ranade and I traded emails. In one of them, he wrote:

The electrical and electromagnetic components are the “nervous system” of modern hardware and contribute to 40-50% of failures. Our ability as a nation to test and build it has declined, but –– imo even bigger –– as a species, we’re still unable to wield electromagnetism to its full potential.

Over the past seven months, we’ve developed a friendship, and Pratap has broken my brain many times. One of the things that’s most fascinated me is the idea he’s betting his company on, the one he emailed me about: humans can’t intuit EM, and it’s a bottleneck to the electric progress we both want to see. There’s no reason machines can’t be taught to understand EM fields much better than we can, though.

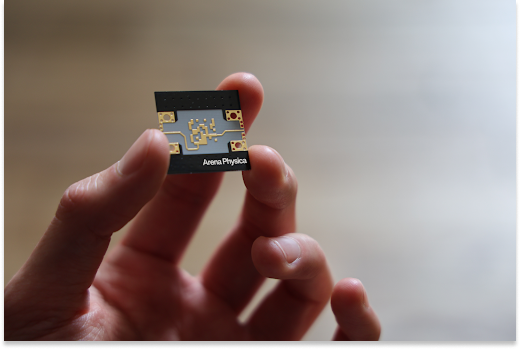

For the past few years, Arena has been building AI tools and deploying expert electrical and RF engineers to help companies design, develop, and debug electromagnetic hardware. They’re working with companies including AMD, Anduril, and Sivers Semiconductors. They are backed by investors including Founders Fund, Peter Thiel, Initialized (Garry Tan), Shield Capital, and 137 Ventures.

Today, they’re re-branding as Arena Physica with the expanded mission to develop “Electromagnetic Superintelligence.”

This is an essay about how to teach machines to see the fields that we can’t, and what the world might look like if we can.

Let’s get to it.

Electromagnetism Secretly Runs the World

Electromagnetism secretly runs our world. “Secretly,” because only a few people on this planet can intuit how it works.

Your phone’s GPS is powered by satellites that broadcast electromagnetic (EM) waves with timestamps. The Wi-Fi in your apartment is created by EM waves bouncing around the walls. Air traffic control is radar, as in EM waves that pulse out and listen for echoes off aircraft. When Maverick locks onto a bogey in Top Gun, he’s using a phased array radar steering EM beams electronically. Contactless payment? EM. Microwave oven? EM. The fiber optic cables carrying the internet across the ocean floor and through Somos’ network? That’s light… which is also EM.

Every single wireless signal, medical image, radar sweep, every chip talking to another chip inside a data center. All of it is electromagnetic waves, shaped and directed by physical structures designed to manipulate these waves. And, as electricity and intelligence race to define our era, EM’s presence is only growing more pronounced. In our data centers, chips communicate with each other via short-range EM waves. If Elon successfully moves the data centers to space, AI will be beamed down from satellites to your device via EM waves.

As Packy and Sam wrote in The Electric Slide, everything that can economically go electric will. Cars, trucks, buses, drones, boats, stoves, heat pumps, batteries, bikes, even planes, anything that moves, heats, lights, computes, or converts energy is moving from mechanical to electric. All of those newly electric things will be full of EM components.

In 1970, electronics accounted for five percent of a new car’s cost, on average. By 2020, that number reached forty percent. By 2030, electronics are expected to reach fifty percent of a consumer vehicle’s cost.

Electronics make up 35% of the cost of the F-35 Lightning II, more than the cost of the engine itself, and 15% of the Pratt & Whitney F135 engine, which costs $20 million. By the 2030s, when defense contractors are projected to be building the F-47, they’ll be spending over 40% of the $300 million airframe on electronics.

This is good. We want to see this electrification continue. Electric machines perform better with less impact on the environment, give us capabilities combustion engines can’t, are better-suited for autonomy, and are riding cost/performance curves that will continue to widen the advantage.

But alongside the challenges addressed in The Electric Slide, particularly those related to production, there’s an equally large one looming in the research and development of new and better electromagnetic machines: our electromagnetic capabilities are bottlenecked by the very small number of humans who actually understand how any of this works.

There is a reason radiofrequency (RF) engineering — the practice of designing hardware that shapes and directs EM waves — is often referred to as black magic. There are maybe ten people in the world who can deeply intuit electromagnetism, who can see which shapes will create which EM fields in their mind’s eye1. I am not one of those people, but I’ve met them. I’m hiring them at my company, Arena Physica, and I went to school with many of them.

There was a guy in my physics program about whom our professor asked, “You know what’s special about this guy?” We all said no. “This guy thinks like an electron.”

What he meant was that electrons, if they were sentient, would feel all of these different fields pulling at them. Electrons would probably have an intuition for this feeling, the same way we have an intuitive feel for gravity, how we just know that when we let go of a ball, it will drop to the ground. Those friends of our ancestors’ who couldn’t intuit gravity did not live long enough to reproduce.

Some people – a vanishingly small number – have spent enough time studying, testing, designing, and simulating electromagnetic systems to be able to intuit them like gravity. But for the rest of us, electromagnetism is mostly invisible.

For the vast majority of human history, we haven’t needed to see beyond the visible spectrum in order to survive. And so we haven’t. Those of our ancestors’ friends who wasted precious resources on seeing the full spectrum of EM waves wouldn’t have lived to pass on these traits, either.

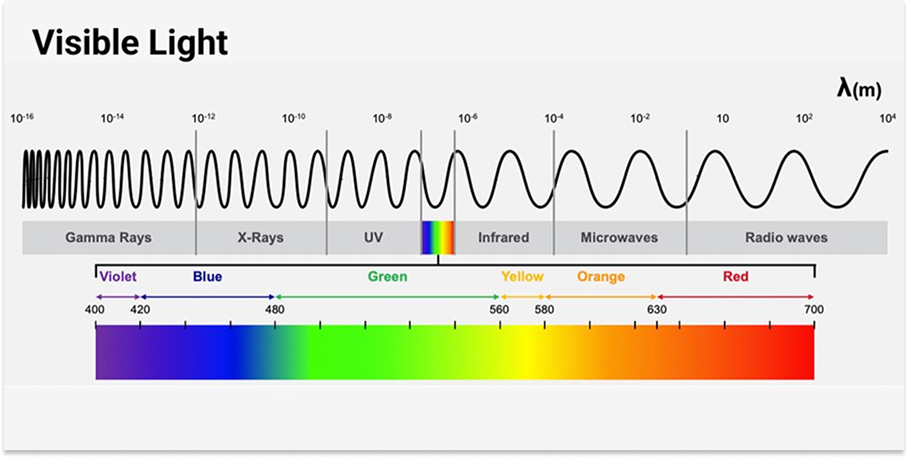

Humans can see a small part of the electromagnetic spectrum, the “visible light” portion with wavelengths between 400 nanometers (violet) and 700 nanometers (red). We don’t see shorter wavelengths (ultraviolet light, X-rays, or gamma rays) or longer wavelengths (infrared, microwave, or radio waves).

This has served us just fine. Until electromagnetism came to run the world.

We have a fundamental force that we rely deeply on, but one that very few of us can work with naturally. This slows technological progress and limits what we can make.

Fortunately, AI doesn’t share our blind spots. It is particularly good at seeing patterns, at making connections and understanding dependencies that are not necessarily intuitive to humans.

Because of this, we believe that computers will be much better at grasping electromagnetism than we are. We should be able to build a Large Field Model (LFM) — like an LLM that generalizes across language, except ours generalizes across EM. We should be able to use this LFM to understand EM waves and shape them to do what we’d like them to do.

That’s the big bet we’re making at Arena Physica. To understand why we’re making it, I want to first make sure you understand electromagnetism.

A Brief Primer on Electromagnetism

Packy and Sam gave A Brief History of Electromagnetism in The Electric Slide.

I’m going to add to that with A Brief Primer on Electromagnetism. I’ll sprinkle in relevant history, but my goal is to make sure we have a working understanding of electromagnetism.

There are four fundamental forces that govern how everything in our universe works:

Strong force

Weak force

Gravity

Electromagnetism

The strong force and weak force operate at subatomic scales. The strong force binds protons and neutrons together in atomic nuclei. The weak force enables radioactive decay and nuclear fusion.

Gravity is the weakest of the four forces by an enormous margin (roughly 10^36 times weaker than electromagnetism). Yet, it dominates at cosmic scales. It only attracts, never repels, which means its forces keep adding up. And it acts on every single particle with mass or energy. It’s also mysterious at a fundamental level: gravity and quantum theory are incredibly powerful theories for how our world works, but they are fundamentally incompatible. This remains one of the deepest unsolved problems in physics. But we have a strong, intuitive relationship with gravity. In our day-to-day lives, we can feel the force.

Electromagnetism is the force we interact with most directly in everyday life. It’s also the one we’ve industrialized most aggressively. It governs light, electricity, magnetism, and chemistry, essentially everything about how matter behaves above the nuclear scale. It’s why matter has structure, why chemistry works, and why technology works. It’s responsible for the structure of atoms (electrons bound to nuclei), the bonds between molecules, the rigidity of solid objects, and all electronics and communication technology. Unlike gravity, electromagnetism has both positive and negative charges, which means it can attract or repel, and large accumulations tend to neutralize themselves. Our mathematical understanding of electromagnetism is extremely accurate: it is described by quantum electrodynamics (QED), the most precisely tested theory in all of science.

And yet… despite this precision, electromagnetic systems can be deeply counterintuitive. RF engineering, for instance, has a reputation as black magic. The wave-like, distributed nature of fields at certain frequencies produces effects that violate the intuitions built from simple circuit theory.

But let’s try to build up our intuition as best we can.

Every force carries energy. Electromagnetic energy comes in quanta we call photons, particles of light, but for most of what we build (antennas, radars, communication systems, phased arrays) it is easier to think about it as waves, in terms of frequency, wavelength, and phase. A photon’s energy depends on its frequency, which is what the electromagnetic spectrum lays out. A very high-energy photon would be something like the EUV (extreme ultraviolet) light that an ASML machine uses to make chips. EUV is very, very high frequency. Therefore, it is very high energy and, in turn, very short wavelength. As you go through the visible spectrum and get to the other side, you reach infrared, which we experience as heat. Then you get to RF (radiofrequency), where photons have very low energy. But it’s really all just electromagnetic waves that follow the same principle. High energy = high frequency = short wavelength and vice versa. (Explore the EM spectrum here.)
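
To put numbers on "high energy = high frequency = short wavelength," here is a minimal sketch using the Planck relation E = hf = hc/λ. The specific wavelengths are our own illustrative picks, not figures from this essay (13.5 nm is the wavelength EUV lithography operates at, and 12.5 cm corresponds to roughly 2.4 GHz, a common Wi-Fi band).

```python
# Planck relation: E = h * f = h * c / wavelength.
PLANCK_H = 6.62607015e-34   # Planck constant, J*s
LIGHT_C = 299_792_458.0     # speed of light, m/s
EV = 1.602176634e-19        # joules per electronvolt


def frequency_hz(wavelength_m: float) -> float:
    """Frequency of an EM wave with the given vacuum wavelength."""
    return LIGHT_C / wavelength_m


def photon_energy_ev(wavelength_m: float) -> float:
    """Energy of one photon, in electronvolts."""
    return PLANCK_H * LIGHT_C / wavelength_m / EV


# Illustrative wavelengths (assumptions chosen for this example).
examples = {
    "EUV (13.5 nm)": 13.5e-9,
    "violet (400 nm)": 400e-9,
    "red (700 nm)": 700e-9,
    "RF (12.5 cm)": 0.125,
}

for name, wavelength in examples.items():
    print(f"{name:>16}: f = {frequency_hz(wavelength):.3e} Hz, "
          f"E = {photon_energy_ev(wavelength):.3e} eV")
```

An EUV photon carries tens of electronvolts while an RF photon carries a few millionths of one, which is why the same physics feels so different at the two ends of the spectrum.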

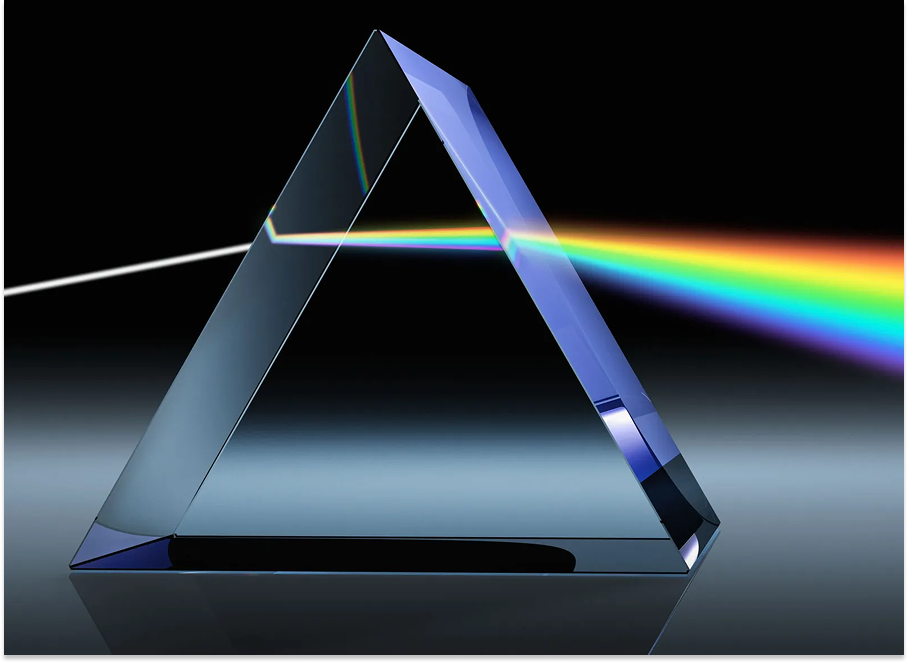

Now, think about a prism. A prism is an object or a material that treats incident EM waves — or different photons — differently. So if you’re a red photon, your refractive index, or how much you bend, is a certain number. If you’re a blue photon, it’s different. Refract the whole beam and you end up with that beautiful rainbow on the other side of the prism.
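
The prism's color-dependent bending can be sketched with Snell's law. The two refractive indices below are illustrative, roughly glass-like values we chose for the example, not measurements of any particular material.

```python
import math


def refraction_angle_deg(n_glass: float, incidence_deg: float, n_air: float = 1.0) -> float:
    """Snell's law: n_air * sin(incidence) = n_glass * sin(refraction)."""
    s = n_air * math.sin(math.radians(incidence_deg)) / n_glass
    return math.degrees(math.asin(s))


# Assumed indices: blue light sees a slightly higher index than red in glass.
N_RED, N_BLUE = 1.513, 1.524

for name, n in (("red", N_RED), ("blue", N_BLUE)):
    angle = refraction_angle_deg(n, 45.0)
    print(f"{name}: travels at {angle:.3f} degrees from the normal inside the glass")
```

The small difference in angle between the two colors is what, after a second refraction on the way out, fans a white beam into a rainbow.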

The prism is an early example of humans manipulating electromagnetic fields. People noticed that light, when it passed through glass or a crystal shaped in a certain way, would form a rainbow. We’ve come up with many ways to manipulate electromagnetic fields since, the most consequential of which is also the simplest.

If a caveman discovered he could manipulate electrons, the very first thing he might do is the simplest possible thing: electron on, electron off. On, off. One, zero.

The prism is actually much more sophisticated than the on, off switch. It’s more subtle, more expressive, and its implications are more powerful. But computing is based on the caveman’s idea of flipping the switch.

Early computers were literally built on this simple idea: they used mechanical switches known as relays to compute. When the metal touches, current flows (1/on). When it separates, current stops (0/off).

One problem with mechanical switches was that, when you switch often in air, the air ionizes and creates tiny bolts of lightning that can jump across the gap and create an arc. These arcs can make your “bit” unreliable. Sometimes it’s supposed to switch, and it doesn’t.

The other problem was that they are very slow. It was the advent of the electronic switch that put us on a path to be able to now build processors that can work at GHz speeds (10^9 cycles per second).

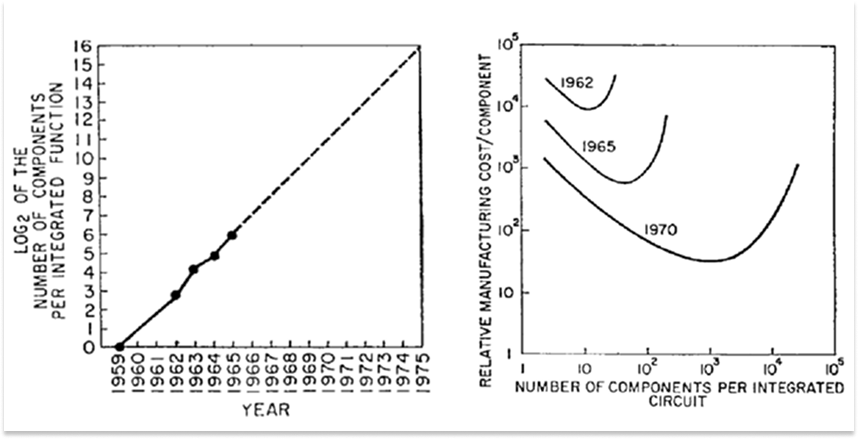

So we invented vacuum tubes2. They removed the air. Without atmosphere, there was no arcing. But vacuum tubes were fragile, power-hungry, and couldn’t scale. The great breakthrough was the semiconductor: materials like silicon whose conduction can be controlled. They can be made to conduct or not conduct (hence semi-conductor) based on applied voltage. Semiconductors enabled the transition from mechanical to digital, and they gave us the transistor, a tiny silicon device that switches on or off using voltage. The transistor gave us Moore’s Law, which gave us Boolean logic at scale, which gave us everything in modern computing. That singular innovation, the transistor, has produced most of our technological advancement over the past seventy years.

But if you read Gordon Moore’s 1965 paper, the one in which he described what would come to be known as Moore’s Law, you’ll find that only the first half is about digital silicon; the second half is about analog silicon.

Nobody paid attention to the analog part, but I think it’s even more fascinating today than the digital one.

Digital silicon is about switching: transistor on or off, conducting or not, one or zero. All the gates, all the logic, all of computation follows from that binary foundation. It’s powerful, but it’s also, as we’ve discussed, the simplest possible thing you can do with an electron. It’s caveman math.
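
To make "all the logic follows from that binary foundation" concrete, here is a minimal sketch that builds an adder out of a single switch-like primitive (NAND). The function names are ours, and this is a teaching toy, not how any real chip is described.

```python
def nand(a: int, b: int) -> int:
    """The only primitive: output is 0 only when both inputs are 1."""
    return 0 if (a and b) else 1


# Every other gate is wired up from NAND alone.
def not_(a: int) -> int:
    return nand(a, a)


def and_(a: int, b: int) -> int:
    return not_(nand(a, b))


def or_(a: int, b: int) -> int:
    return nand(not_(a), not_(b))


def xor(a: int, b: int) -> int:
    return and_(or_(a, b), nand(a, b))


def half_adder(a: int, b: int) -> tuple[int, int]:
    """Return (sum bit, carry bit) for two input bits."""
    return xor(a, b), and_(a, b)


def add_bits(x: int, y: int, width: int = 8) -> int:
    """Ripple-carry addition using only the gates above (wraps at 2**width)."""
    carry, result = 0, 0
    for i in range(width):
        a, b = (x >> i) & 1, (y >> i) & 1
        s1, c1 = half_adder(a, b)
        s2, c2 = half_adder(s1, carry)
        result |= s2 << i
        carry = or_(c1, c2)
    return result


print(add_bits(5, 9))
```

Nothing here is more than on and off, repeated; arithmetic falls out of the wiring.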

Analog silicon is about shaping. Instead of just on/off, you’re asking: what if I could bend the electromagnetic wave? What if I could guide it, direct it, absorb it at specific frequencies and reflect it at others? In practice, this is RF front-ends, antennas, packages, and printed circuit boards (PCBs) behaving like a single, unified electromagnetic object.

This is how the world works too. The world is analog. The world does not work in 0s and 1s, but rather in the continuum in between them. Even if all computation is done digitally, you’ll need to deal with analog signals and shape waves the moment you need to interact with the real world (for example, capture sound in a microphone, produce sound in a speaker, send wireless signals over the air, send light on optical fibre).

Remember the prism? That’s what analog silicon does, but for all electromagnetic frequencies, not just visible light. Instead of glass bending light, you can use carefully shaped conductors printed on silicon to bend, direct, and shape EM waves.

This is where we leave the realm of deterministic computing and enter a world of black magic.

Try This At Home

Here’s an experiment. You can try this at home.

Materials List: COPPER WIRE, COMPASS, BATTERY.

Take your copper wire, connect it to a battery, and run current straight through it. The magnetic field it produces will wrap around the wire in concentric circles. You can confirm it’s doing this by holding a compass near it and watching the needle deflect perpendicular to the wire.

Now, coil that same wire into a spring shape (a solenoid) by wrapping it around a pencil or screw 10-15 times. Run current through it. The magnetic field is completely different: instead of wrapping around the wire, it shoots straight through the center of the coil. Same wire, same current. But a different shape = radically different field.
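
For a rough sense of scale, here is a minimal sketch using the textbook formulas for a long straight wire (B = μ0·I / 2πr) and an ideal long solenoid (B = μ0·n·I). The current, distance, turn count, and coil length are assumed values for a tabletop setup, and the ideal-solenoid formula only approximates a short hand-wound coil.

```python
import math

MU_0 = 4 * math.pi * 1e-7  # vacuum permeability in T*m/A (approximate value)


def straight_wire_field(current_a: float, distance_m: float) -> float:
    """Field magnitude (tesla) at a distance from a long straight wire."""
    return MU_0 * current_a / (2 * math.pi * distance_m)


def solenoid_field(turns: int, length_m: float, current_a: float) -> float:
    """Field magnitude (tesla) inside an ideal long solenoid."""
    return MU_0 * (turns / length_m) * current_a


# Assumed setup: 1 A of current, compass held 1 cm from the wire,
# then the same wire wound into 12 turns over 3 cm.
wire = straight_wire_field(1.0, 0.01)
coil = solenoid_field(12, 0.03, 1.0)

print(f"straight wire at 1 cm: {wire * 1e6:.1f} microtesla")
print(f"inside the coil:       {coil * 1e6:.1f} microtesla")
print(f"ratio:                 {coil / wire:.0f}x")
```

Under these assumptions the straight wire produces a field comparable to Earth's own (tens of microtesla), enough to nudge a compass, while the coil concentrates the same current into a field many times stronger along its axis.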

This is the fundamental game of electromagnetism: geometry determines behavior. Every antenna, radar, or phased array tile is just a more sophisticated version of this principle. Find the right shape, and you can make electromagnetic fields do almost anything.

To understand why shapes matter so much, consider what happens when an electromagnetic wave hits a conductor.

A conductor is special because it has free electrons. Free electrons are not locked into a lattice like in an insulator, but instead swim around in what physicists call an “electron sea.” When a photon (an EM wave) hits this electron sea, those electrons start to move in response. They oscillate with the wave.

This is fundamentally how an antenna works. The old bent TV antenna on your grandparents’ roof was shaped specifically to receive UHF frequencies broadcast from a distant TV station. The EM waves traveling through the atmosphere would hit the antenna, excite the electrons in the metal, and those oscillating electrons would travel down the wire into your TV as a signal.

That signal carried information, like encoded images of I Love Lucy, compressed into patterns of electromagnetic oscillation, broadcast through the air, absorbed by your antenna, decoded by your TV. If you step back and think about it, this entire chain is completely absurd. We transmit moving pictures through the air using invisible waves. And turning those waves back into pictures all comes down to the shape of a wire.

Radar works basically the same way, except it’s more high-powered and moves in reverse.

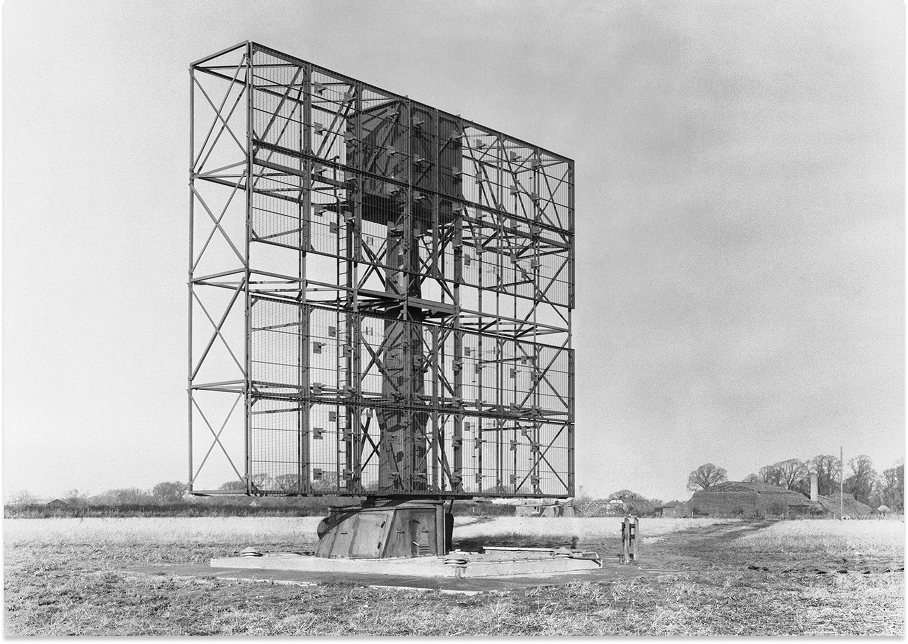

World War II accelerated radar. It also showed us how badly we needed it. The Allies were being pummeled and needed to track incoming threats. They turned to radar, which had developed quickly thanks to the war. In the late 1880s, Heinrich Hertz (of Hz fame) showed that radio waves could reflect off objects. In the decades that followed, several physicists also noticed that radio signals behaved strangely when ships or other objects were nearby. Through the 1920s and early 1930s, scientists in the U.S., U.K., Germany, France, the Soviet Union, Italy, and Japan all experimented with using radio echoes to detect objects.

In 1935, a Brit named Robert Watson-Watt (no relation to the steam engine Watts) proposed and then demonstrated a practical aircraft-detection system using pulsed radio waves. This led to the Chain Home early-warning network along the English coast. Chain Home was operational at the start of WWII and gave the Royal Air Force so much of an early warning in the Battle of Britain that it’s often credited as a key factor in preventing a German invasion. The United States picked up development a bit later, with the benefit of British tech transfer, and scaled up the technology’s capabilities and manufacturing. In the U.S., Alfred Loomis led research efforts at Tuxedo Park3 and helped establish MIT’s Rad Lab, which developed fire-control radar, airborne radar, and navigation radar. Germany built parallel systems that pushed the state-of-the-art in different directions.

Radar, instead of receiving a broadcast signal, transmits a beam on multiple wavelengths, waits for it to reflect off something (like a bomber), and then listens for the echo. If the object is big enough and close enough, you can detect it.
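
The "listen for the echo" step reduces to simple arithmetic: the pulse travels out and back at the speed of light, so range is half the round-trip time multiplied by c. A minimal sketch, with function names of our own choosing:

```python
LIGHT_C = 299_792_458.0  # speed of light, m/s


def echo_range_m(round_trip_seconds: float) -> float:
    """Distance to the target given the time between pulse and echo."""
    return LIGHT_C * round_trip_seconds / 2.0


def round_trip_seconds(range_m: float) -> float:
    """Time a pulse takes to reach a target at range_m and return."""
    return 2.0 * range_m / LIGHT_C


# An echo arriving one millisecond after the pulse left.
print(f"{echo_range_m(1e-3) / 1000:.1f} km")
```

A one-millisecond delay corresponds to a target roughly 150 km away, which gives a feel for the timing precision a radar receiver needs.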

But to scan the sky, you need to point your beam in different directions. In the 1940s, that meant literally spinning a large dish antenna. You needed mechanical motors to do it. A mechanical gimbal rotating a giant antenna.

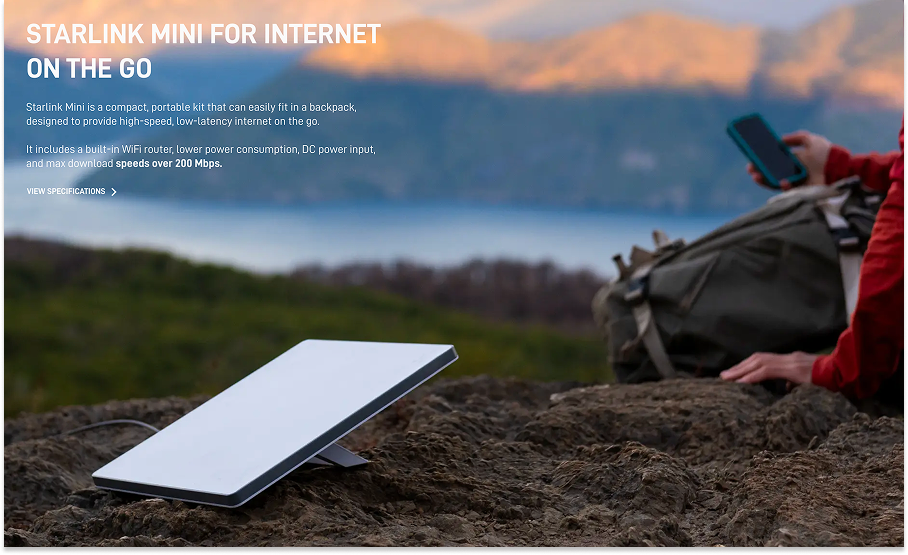

This worked, but it had obvious limitations. Moving parts break, for one. For another, the dish can only spin so fast. Today, Starlink satellites need to update their pointing multiple times a second, since they are moving at 7.6km/second. Try doing that mechanically and for 5,000 simultaneous beams.

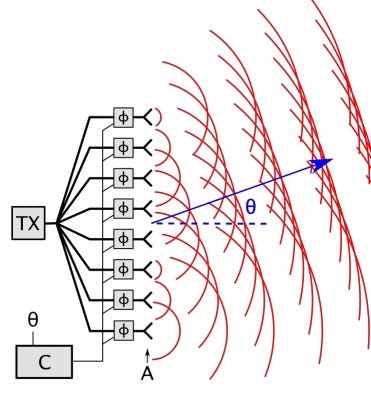

This is where the second half of Moore’s 1965 paper becomes relevant. Moore realized you could use transistors to solve the spinning-dish problem. Replace the mechanical movement with electronic steering.

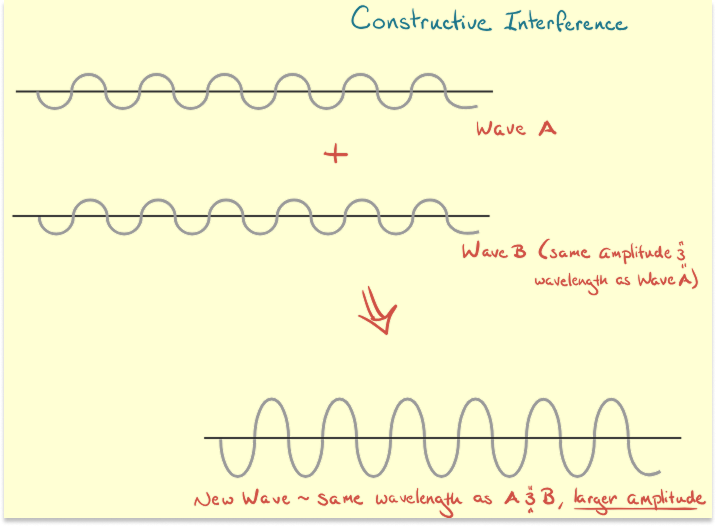

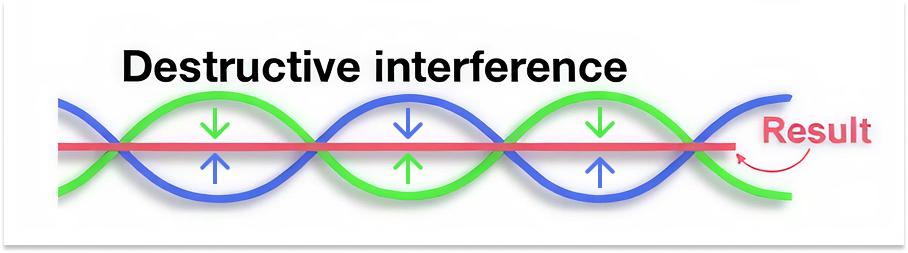

The key insight is constructive and destructive interference, the same phenomenon you see when ripples on a pond meet and either amplify or cancel each other.

Imagine you have a grid of small antenna tiles instead of a single big dish, like a checkerboard where each square is a tiny antenna. Each tile can emit a signal. Each tile comprises the whole RF front-end and the antenna structures across chip, package, and PCB, acting like a single, holistic EM object. Now, if you start the signal from the leftmost tile first, then the next tile a tiny bit later, then the next, and so on and so forth, the wave fronts from each tile will interfere with each other. Get the timing right and they’ll constructively interfere in one specific direction, creating the effect of a single, focused beam pointing in the direction you want.

Change the timing pattern, and the beam will point somewhere else. You can replace moving parts with analog and digital logic that controls when each tile fires.
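
Here is a minimal sketch of that timing trick for a single row of tiles, using the standard array-factor sum for a uniform linear array. The element count, half-wavelength spacing, and function names are assumptions for illustration; real tiles are two-dimensional and far messier.

```python
import cmath
import math


def array_factor(theta_deg: float, n_elements: int, spacing_wl: float,
                 phase_step_rad: float) -> float:
    """Normalized strength of the combined signal in direction theta.

    spacing_wl is the tile spacing in wavelengths; phase_step_rad is the
    extra phase (timing offset) applied from one tile to the next.
    """
    theta = math.radians(theta_deg)
    # Phase difference between neighbouring tiles as seen from direction theta:
    # geometric path difference plus the programmed offset.
    psi = 2 * math.pi * spacing_wl * math.sin(theta) + phase_step_rad
    total = sum(cmath.exp(1j * k * psi) for k in range(n_elements))
    return abs(total) / n_elements


def phase_step_for(steer_deg: float, spacing_wl: float) -> float:
    """Per-tile phase offset that makes the waves add up toward steer_deg."""
    return -2 * math.pi * spacing_wl * math.sin(math.radians(steer_deg))


def beam_peak_deg(n_elements: int, spacing_wl: float, phase_step_rad: float) -> float:
    """Scan -90..90 degrees in 0.1 degree steps and return the strongest direction."""
    angles = [a / 10 for a in range(-900, 901)]
    return max(angles, key=lambda a: array_factor(a, n_elements, spacing_wl, phase_step_rad))


N, SPACING = 16, 0.5  # assumed: 16 tiles, half a wavelength apart
for steer in (0, 30, -45):
    step = phase_step_for(steer, SPACING)
    print(f"requested {steer:>4} deg -> beam peaks at {beam_peak_deg(N, SPACING, step):.1f} deg")
```

Nothing moves between the three runs; only the per-tile phase offset changes, and the direction of constructive interference follows it.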

This is called a phased array. And it’s how modern radar works. If you want to develop a better intuition by playing with it, we built a little simulator here.

The radar on an F-35 is called an AESA (Active Electronically Scanned Array). Nothing on it moves. It’s just a grid of semiconductor tiles, and the “beam” sweeps across the sky purely through timing. It’s also how Starlink works. Each Starlink terminal has 1,280 of these beam-forming silicon tiles. That’s why you can buy a flat panel for $300 that does what used to require a million-dollar spinning dish.

What’s happening on those tiles?

Remember: digital silicon is about transistors switching on and off. But the tiles in a phased array shape electromagnetic fields through their physical geometry.

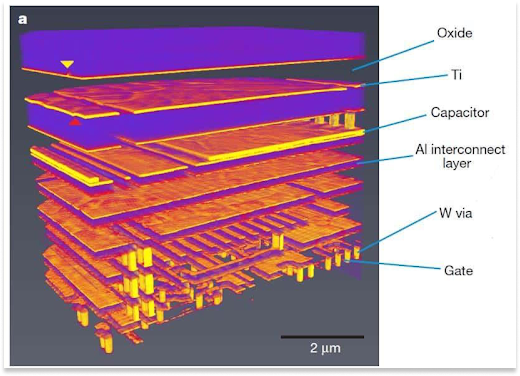

Think back to the TV antenna, a bent wire specifically shaped to receive certain frequencies. Imagine you could print that shape onto a silicon chip, layer by layer, laying down copper traces in specific geometries, creating structures that interact with EM waves in precise ways.

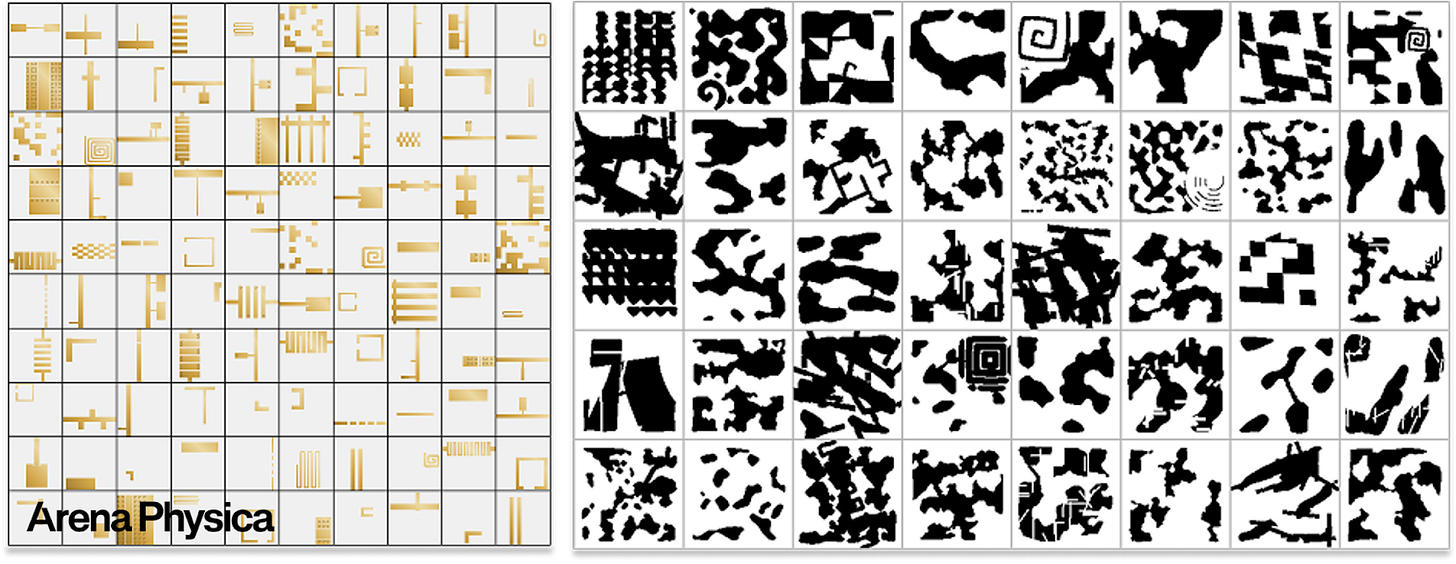

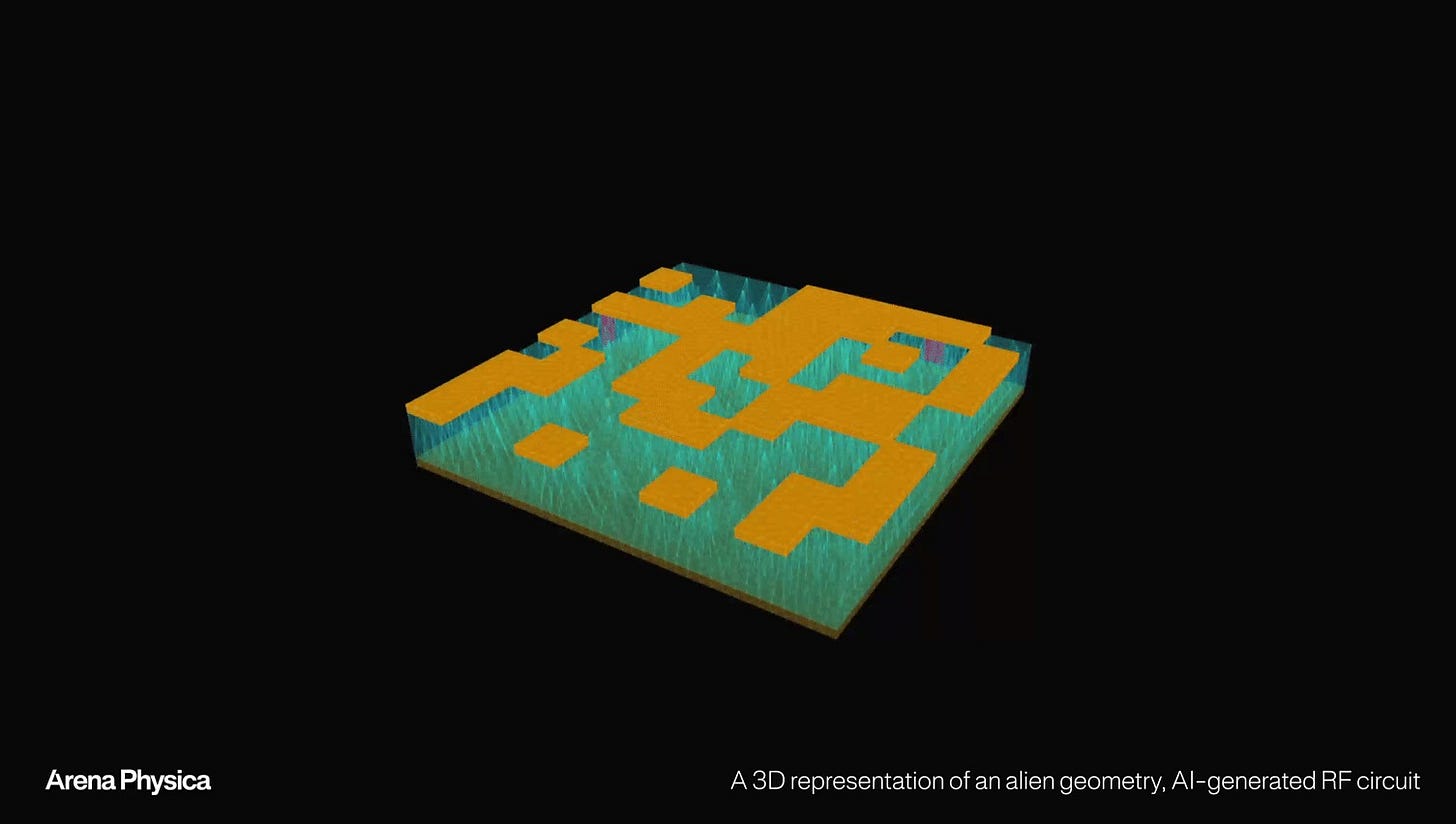

On one layer, you might have a spiral. On the next, a grid. On the next, something that looks like a QR code. Stack them up with tiny connections between layers called vias, and you’ve created a three-dimensional structure that can emit, receive, absorb, and reflect electromagnetic waves at specific frequencies and in specific directions, which you have full control over.

This is what a phased array tile actually is: a 3D sculpture of copper and silicon, designed so that current flowing through it creates exactly the EM field you want.

Why This is So Hard to Build

Creating an EM field of your liking is all about geometry, which means it’s all about shapes. But how do you know which shapes to make?

With digital silicon, the rules are relatively simple. Transistors are either on or off. You can simulate billions of them with perfect accuracy. The design problem is about routing and timing, but the physics is well-behaved.

Analog silicon is different. The physics is wave physics, and waves do things that violate our intuitions.

At optical (high) frequencies, we often get away with “ray optics” intuition; light mostly travels in straight-ish lines, reflecting and refracting, and you can treat its components as fairly local.

At RF, the wavelength is big enough that your whole device becomes part of the circuit. Fields couple into enclosures, PCBs, screws, nearby tiles… Everything talks to everything. That’s why RF feels like black magic, and why you have to simulate the whole object to predict how it’ll perform.

When you’re designing a Starlink tile, for example, you can’t just model the tile in isolation. The EM waves emanating from it will interact with the entire Starlink unit: the metal casing, the other tiles, the mounting bracket, the support structure. You have to simulate the whole system at once.

This is why there are no automated tools for analog circuit design. Digital circuits can be “synthesized” from code; a digital designer can write “RTL” code that describes how their digital circuit works. Then, there are tools that can read the code, and “compile” it to a chip. But no such tools exist for analog design. There are no “standard cells” for analog, no standard analog designs, no standard building blocks. Everything interacts with everything.

Which is why there’s no ARM for analog silicon4. There are no companies that can sell “IP,” standardized circuit designs, to a multitude of customers in a highly profitable fashion — no such standard circuit exists. Every new system is different, and as a result, each new customer has different needs.

ARM can design a chip that works in any phone because digital chips are self-contained. But an analog phased array tile designed for a Starlink terminal won’t work in a different satellite. The interference patterns will be completely different!

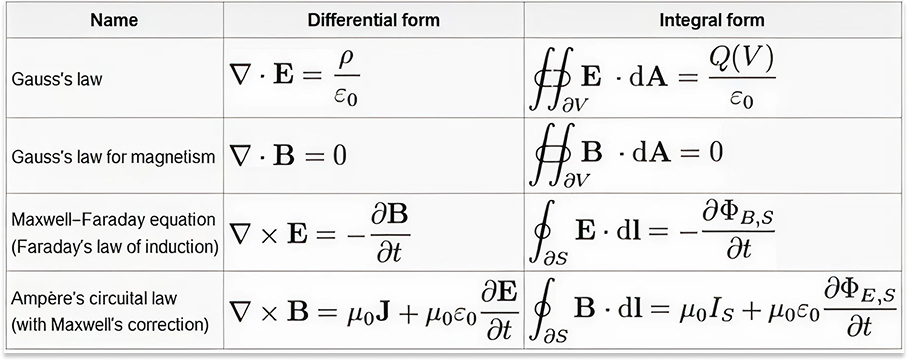

And the simulation tools are slow. The equations governing electromagnetic fields are called Maxwell’s equations, four partial differential equations that are notoriously difficult to solve.

They’re just equations. What’s the problem?

If an EM wave is at a higher frequency, it’s more “particle-like”: intuitively, it’s like a ball. You know where it is, and it bounces off stuff cleanly. If the ball is in one corner, it doesn’t affect anything in the other corner. As EM wavelengths get longer (into RF), they become more wave-like, and the particle is sort of “spread out.” The waves start to interfere with each other a lot, like ripples on a pond. They can either strengthen or cancel each other out.

So, if you’re NVIDIA and selling high frequency chips in boxes, you can sell a single product. You can design one GPU and sell it to everyone. There is no difference between the Navy putting your chip on a ship, or Sony putting it in a PlayStation. They can all just buy the chip and plug it in. But if you’re buying components for a phased array system, for example, you have to model the entire system, because it sits in the wave-like regime, not the particle-like one. A design for a Navy ship won’t work in a Starlink terminal. The EM fields interact with everything around them: the metal casing, the mounting structure, nearby components. Change the environment, and you need a completely new design. Everything becomes a custom services problem that is rate-limited by these rare experts and simulation.

In short, the solvers are slow, even with supercomputers or programs like Ansys, because the equations are tough to solve and require expertise to wield. The reason the equations are very hard is boundary conditions (the edges, where smooth calculus breaks down), e.g., a sharp metal edge can cause problems by creating strong electromagnetic reflections that cause fields to concentrate in unexpected ways.

Running a full simulation of a proposed design can take hours or days. Here’s an example design loop: make your best guess at a shape, wait hours for simulation, discover it doesn’t quite work, adjust the shape, wait hours again. In our work with field experts, we’ve seen a single iteration with legacy tools take a week. That’s not enough ‘shots on goal’ to develop Electromagnetic Superintelligence.

RF design can’t be done by brute-force computation. The search space is effectively infinite and each evaluation takes too long.

Take a simple two-layer circuit where each pixel in a 64×64 grid can be either metal or dielectric. That’s already 2^(64×64), or roughly 10^1,233, possible configurations for a single, small component. The entire history of human RF design has explored a vanishingly small fraction of that space, obviously. Here, see how many of those configurations you can come up with.
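
The size of that space is easy to check. This minimal sketch counts one 64×64 grid of metal-or-dielectric pixels, as the text does, and then asks how long exhaustive search would take at an assumed one millisecond per evaluation (the millisecond figure and the age-of-universe constant are our own round numbers).

```python
import math

GRID = 64
cells = GRID * GRID                  # 4,096 pixels, each metal or dielectric
configurations = 2 ** cells          # exact count as a Python big integer

exponent = cells * math.log10(2)     # log10 of the number of configurations
digits = len(str(configurations))

print(f"2^{cells} has {digits} decimal digits (about 10^{int(exponent)})")

# Assumption: one evaluation per millisecond, running nonstop.
SECONDS_PER_EVAL = 1e-3
AGE_OF_UNIVERSE_S = 4.35e17          # roughly 13.8 billion years, in seconds

log10_universe_ages = exponent + math.log10(SECONDS_PER_EVAL) - math.log10(AGE_OF_UNIVERSE_S)
print(f"exhaustive search would take about 10^{int(log10_universe_ages)} ages of the universe")
```

Even a surrogate that answers in milliseconds cannot enumerate this space, which is why the search has to be guided rather than exhaustive.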

Navigating this search space requires intuition. You need someone who can look at the desired field pattern and just... sense what shape might produce it.

The people who can do this have spent decades building up a feel for how electrons move through structures, how fields bend around corners, how waves interfere. They can sketch a spiral on a whiteboard and tell you roughly what frequencies it will emit strongly and which it will absorb. Aside from my classmate who could think like an electron, though, this intuition isn’t natural even to those special few. They acquire it painstakingly over long careers. Because, unlike gravity, there was never evolutionary pressure to understand electromagnetic fields outside of the visible spectrum. We don’t feel them. They’re invisible to us.

I watch my baby daughter learning about the world. She already has an intuition for mechanics. She knows that if you roll a glass off a table, it will break. She has no intuition for electromagnetism, which is probably genetic. 99.99% of people don’t.

It is a miracle that we’ve been able to manipulate EM waves to our purposes to the extent that we have. But the world is only getting more electromagnetic, and we will need a lot more shapes.

That means we need to build something that does have intuition for electromagnetism.

AlphaGo for Electromagnetism

In 2016, DeepMind’s AlphaGo defeated Lee Sedol, one of the greatest Go players in history.

The moment that stuck with everyone was in Game 2, Move 37.

The expert commentary went something like this: “That’s a mistake.” Then: “That’s stupid.” Then: “That’s a very strange move.” And finally: “That’s beautiful. That’s elegant.”

AlphaGo had done something no human would have tried, a move so unconventional that the world’s best players initially dismissed it as an error. But it worked. The machine had discovered a strategy that humans, despite thousands of years of playing Go, had never found.

What made AlphaGo possible? Two things. First, Go has clear rules and a perfect simulator. You always know exactly what state the board is in and which moves are legal. Second, because of these properties, a computer can play millions of games against itself very quickly. AlphaGo learned by playing more games of Go than all humans in history combined.

We want to do what AlphaGo did for Go — but for physics. What if we could build a system that played millions of “games” of electromagnetic design and developed an intuition that humans simply can’t acquire?

There was an obvious obstacle between us and that dream. AlphaGo worked because Go can be simulated perfectly. You know exactly what happens when you place a stone on the board. But physics is more complicated, and the simulators are slow. Maxwell’s equations take hours to solve. You can’t “play a million games” overnight.

So we needed to build the simulator first.

The EM foundation model that we’ve built, and continue to scale, is the simulator for EM physics.

The starting point for what we’ve built is known as a neural surrogate. The idea is simple: instead of solving Maxwell’s equations from scratch every time (which is slow), you train a neural network to approximate the solutions (which is fast). It’s like the difference between calculating the sine of an angle by hand versus looking it up in a table, except the “table” is a neural network that can interpolate to angles you’ve never seen before.

Traditional physics simulators work by brute force. They break space into tiny chunks, apply the equations at each point, and iterate until the solution converges. It’s accurate, but a single simulation can take hours.
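To make the surrogate idea concrete, here is a minimal sketch of the sine-table analogy. Everything in it is an illustrative assumption: `slow_solver` is a hypothetical stand-in for an expensive field solver, and `TableSurrogate` uses plain linear interpolation where a real surrogate would use a trained neural network. The pattern is the same, though: pay the solver cost once, up front, then answer new queries cheaply.

```python
import bisect
import math


def slow_solver(x: float) -> float:
    """Hypothetical stand-in for an expensive field solver; any smooth function works."""
    return math.sin(3.0 * x)


class TableSurrogate:
    """Samples the solver once, then answers new queries by interpolation."""

    def __init__(self, lo: float, hi: float, n_samples: int) -> None:
        step = (hi - lo) / (n_samples - 1)
        self.xs = [lo + i * step for i in range(n_samples)]
        # "Training": one solver call per sample, paid up front.
        self.ys = [slow_solver(x) for x in self.xs]

    def predict(self, x: float) -> float:
        # Find the two training samples that bracket x and blend them linearly.
        i = bisect.bisect_right(self.xs, x)
        i = min(max(i, 1), len(self.xs) - 1)
        x0, x1 = self.xs[i - 1], self.xs[i]
        y0, y1 = self.ys[i - 1], self.ys[i]
        t = (x - x0) / (x1 - x0)
        return y0 + t * (y1 - y0)


surrogate = TableSurrogate(lo=-1.0, hi=1.0, n_samples=201)

# Query points that fall between the training samples.
queries = [-0.98765 + 0.0123 * k for k in range(160)]
max_error = max(abs(surrogate.predict(q) - slow_solver(q)) for q in queries)
print(f"worst-case surrogate error on unseen points: {max_error:.2e}")
```

The surrogate never calls the solver at query time, which is where the speedup comes from. The price is a small approximation error, which is the trade-off discussed next.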

But what we’re building goes beyond a surrogate. Most surrogates in physics are narrow: trained to approximate one specific simulator for one specific class of problems. Arena Physica’s model learns the relationship between shapes and fields directly, allowing it to generalize. It’s not a faster calculator (it’s not a calculator at all). The neural surrogate is learning the syntax of physics. Just as GPT learned the “logic” of language, our model is learning the “logic” of fields. Show it enough examples of “this shape produces this field pattern,” and it learns to predict new patterns for new shapes almost instantly. We’re talking about 18,000x speedups, hours to milliseconds.

If you’re reading closely, you might be thinking to yourself: of course you can go faster if you’re just trying to get an approximate answer and not a perfect solution.

Good catch. This is where the magic happens.

When you’re searching for good designs, speed and direction matter more than precision.

Think about how an experienced RF engineer actually works. They use their intuition to filter out ideas that probably won’t work and to get the rough shape of ideas that might. Then, they simulate those. They make fast, approximate judgments to decide where to invest their slow, precise simulation time.

Arena Physica’s model does the same filtering, just much faster. It doesn’t need to tell you exactly how well a shape will perform. It needs to tell you how each shape will perform relative to the others. Good enough for search is a much lower bar than good enough for publication.
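A toy sketch of why relative accuracy is enough for search. The two scoring functions are hypothetical: `true_score` stands in for the slow, precise solver, and `fast_score` is a deliberately miscalibrated approximation that still preserves order. The absolute numbers are badly off, yet the shortlist is identical.

```python
def true_score(x: float) -> float:
    """Hypothetical figure of merit from the slow, precise solver (higher is better)."""
    return -(x - 0.33) ** 2


def fast_score(x: float) -> float:
    """Hypothetical fast evaluator: wrong scale and offset, but order-preserving."""
    return 0.5 * true_score(x) - 3.0


candidates = [i / 20 for i in range(21)]  # 21 toy "shapes" between 0.0 and 1.0


def top_k(score, k=3):
    """Return the k best candidates under the given scoring function."""
    return sorted(candidates, key=score, reverse=True)[:k]


mean_abs_error = sum(abs(fast_score(c) - true_score(c)) for c in candidates) / len(candidates)
shortlist_fast = top_k(fast_score)
shortlist_true = top_k(true_score)

print(f"mean absolute error of the fast evaluator: {mean_abs_error:.2f}")
print("same shortlist:", shortlist_fast == shortlist_true)
```

A real approximate model will not be perfectly order-preserving, so in practice the shortlist still gets checked with the slow solver. The point is only that ranking quality, not absolute error, decides whether the search goes in the right direction.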

Speed lets us flip the problem around. Instead of asking “what field does this shape produce?” we can ask “what shape produces this field?” That’s generative design. We specify what we want, say, an antenna that transmits strongly at 28 GHz but rejects interference at neighboring frequencies. The system uses our desired state to generate shapes that might achieve our goals.

Then, we pair two models in a loop: one that generates designs, and one that evaluates them.[5]

The generator proposes a batch of shapes, many of them wild, strange, things that no human would come up with. Move 37 shapes. The evaluator characterizes them all in seconds, directionally: this one’s terrible, that one’s promising, this one’s interesting. The best candidates get refined via small variations and perturbations. The evaluator evaluates the refinements. Repeat.

At each step, we ask, “Is this one better for my goal than the shape we had before?” Because we know what the rules are, like AlphaGo, and what our goals are, like AlphaGo, we can reward the model for getting closer. And like AlphaGo, by making simulation cheap, we can explore much more of the design space than we could have if everything we wanted to try required a precise multi-hour simulation.
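The generate, evaluate, refine, repeat loop can be sketched in a few lines. This is a toy under stated assumptions, not Arena Physica's system: `TARGET`, `evaluate`, `generate`, and `refine` are all hypothetical, the "shape" is just a short list of numbers, and the evaluator is a simple distance to the target rather than a learned field model.

```python
import random

TARGET = [0.2, 0.8, 0.5, 0.1]  # hypothetical desired response, one number per band


def evaluate(shape):
    """Stand-in for the fast evaluator: squared miss from TARGET (lower is better)."""
    # Toy "physics": the response is just the shape itself.
    return sum((s - t) ** 2 for s, t in zip(shape, TARGET))


def generate(rng, batch_size):
    """Stand-in for the generator: propose random shapes, however strange."""
    return [[rng.random() for _ in TARGET] for _ in range(batch_size)]


def refine(rng, shape, n_variants, scale):
    """Small random perturbations around a promising candidate."""
    return [[s + rng.gauss(0.0, scale) for s in shape] for _ in range(n_variants)]


def design_loop(rounds=30, batch_size=32, keep=4, seed=0):
    rng = random.Random(seed)
    population = generate(rng, batch_size)
    best_per_round = []
    for _ in range(rounds):
        ranked = sorted(population, key=evaluate)      # evaluate everything cheaply
        elite = ranked[:keep]                          # keep the best candidates
        best_per_round.append(evaluate(elite[0]))
        children = [v for parent in elite for v in refine(rng, parent, 6, 0.05)]
        fresh = generate(rng, batch_size - keep - len(children))
        population = elite + children + fresh          # next round's batch
    best = min(population, key=evaluate)
    return best, best_per_round


best, history = design_loop()
print(f"first-round best: {history[0]:.4f}, final best: {evaluate(best):.6f}")
```

Because the best candidates are carried forward each round, the best score can never get worse. The loop runs almost a thousand evaluations in total, which is only practical because each evaluation is cheap.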

In Asymmetry of verification and verifier’s law, OpenAI’s Jason Wei describes the “verifier’s law.” Essentially, it says that any task that’s easy and fast to verify will be automated by AI. The hard part about our world is that verification relies on special humans and simulators that are slow and expensive. By attacking this first via the foundation model for fields, acting as a fast simulator, we’ve made this problem accessible to generative AI for the first time. Our generator learns the weights through our feedback loop.

This is the same loop that made AlphaGo work: generate, evaluate, learn, repeat.

Run the loop for yourself here. You get a visceral sense for the importance of speed.

Of course, this loop only works if you have enough training data to feed it, and unlike LLMs, which can scrape the internet for training data, EM field simulations don’t exist in the wild. Nearly every single data point has to be created. So we’re building our own Data Factory.

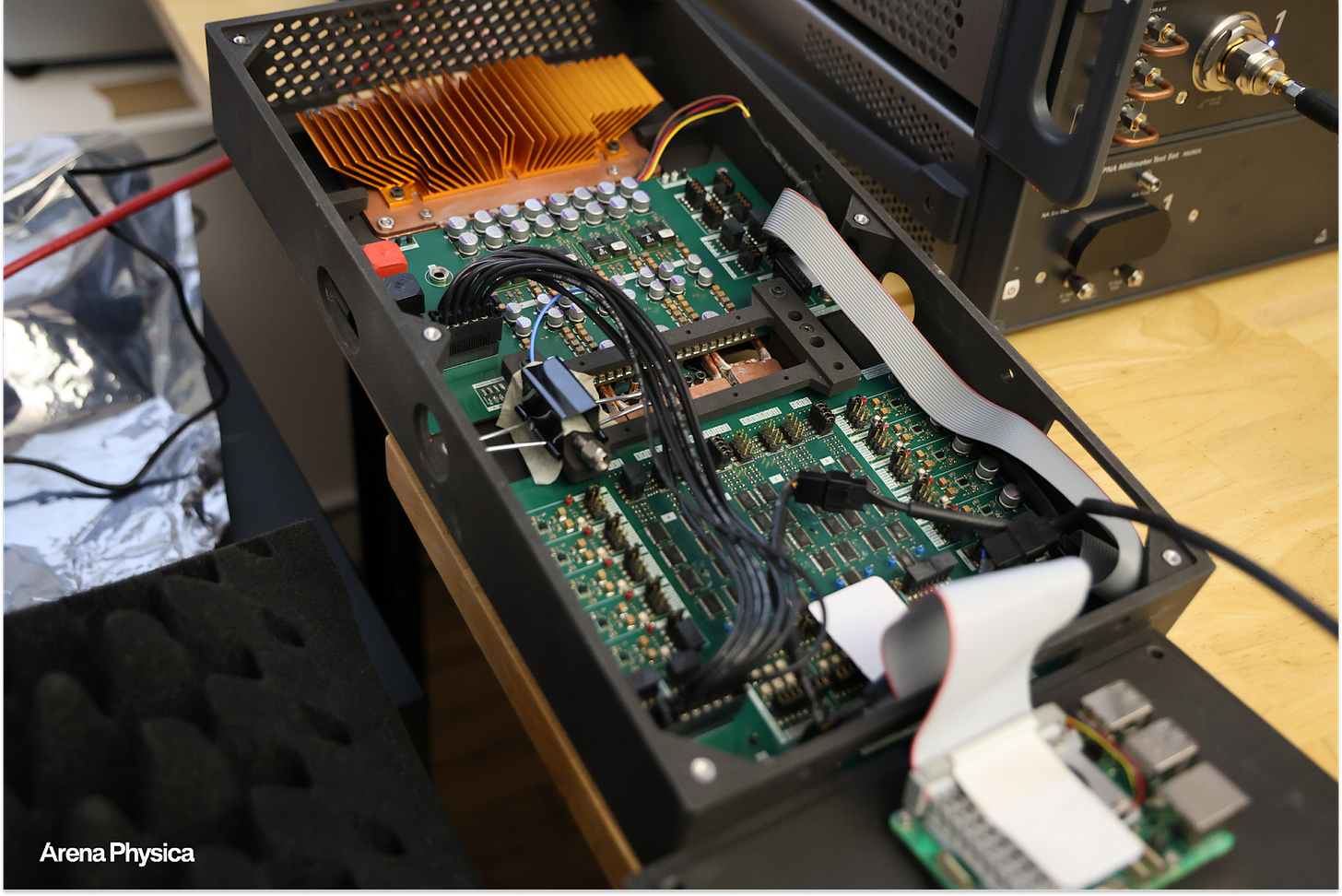

To do that, we’re hiring the best RF lead designers we can find. Put them in one place, where, theoretically, we can amortize the capabilities of this scarce group of people over all sorts of existing and newly possible customers. Have them create designs, give feedback, test their designs, run through the loop.

We generate random designs synthetically, our experts create seed designs that our system can then procedurally amplify, and we fabricate the top candidates and pipe real-world measurements back into training.

The Data Factory has three layers: high-volume synthetic data, high-information expert-seeded data, and ground-truth fabrication data.

Because of how manual the Data Factory is today, we need to pick our use cases strategically. We are starting with analog silicon (chip packaging, phased array components, RF front-ends) and the full phased array system we mentioned above. We’ll be expanding into new domains, like superconducting quantum computing, in conversation with our partners.

The factory is the moat. You can’t build a foundation model for EM without it, nobody else that we know of is building one, and to try, you’d have to hire from the very small pool of experts that we’re bringing together at Arena Physica.

Then, when we’ve converged on something promising, by feeding the Data Factory’s output into our loop, we validate it by running the slow, precise traditional solver on our best candidate. Or better yet, by fabricating the design and validating it in the real world.

Remember why there’s no ARM for analog? At RF frequencies, the wavelengths are long enough that EM waves interact with everything around them. When designing a phased array for a Starlink terminal, you can’t just model the chip; you have to model the chip, the circuit board, the metal casing, the mounting structure, everything. It all affects how the EM waves behave.

Because of that, we’re even using our own components to build an entire phased array system for imaging and detection from scratch.

We’ll tape out silicon before the end of the year. And, for any part of the problem that’s not analog, we’re actually using our agentic stack (our hardware-aware agents, operating on our metagraph, a dynamic graph representation of the hardware, and talking to tools via MCP) to speed up every single aspect of the process, so that we can go end-to-end faster. In this way, we benefit from all the amazing leaps coming from the foundation models, but also own something they can’t replicate: an EM foundation model, fed by our own Data Factory.

The system compounds: fast approximate evaluation enables broad search, broad search finds promising candidates, fabrication validates and generates training data, training data improves the generator, better generator enables even broader search.

If you can do all of the RF design work in our loop, you can build an analog IP Factory.

The IP Factory for RF and the Compiler for Atoms

Our EM Foundation model’s key advantage over existing surrogates is that it can generalize.

Take a philosophical leap with me.

LLMs don’t mechanically learn to classify a sentence. They train on the structure of words and sentences in relation to each other. The rest of their behavior is emergent.

Before LLMs, you had spam detection as its own major problem. You had summarization as its own problem. Translation was its own separate problem. There were actually good companies with good ML teams doing each. The thing that they all got wrong was focusing on narrow problems. What we’ve learned is that if you can understand language at the root level, and you see scaling laws, you can get all the downstream applications for free.

So if it is true that there’s a fundamental relationship between geometry and EM fields, just like there is in language, and if scaling laws are true, then this model should generalize.

EM simulation looks a lot like language pre-LLMs. Where Ansys simulators fail – e.g., this tool is for antenna simulation, this tool is for EMI simulation for an automotive engine – we could tackle all of that with one model, like an LLM tackles translation, sentiment analysis, spam detection, and so much more all in one.

We believe that LLMs were the first foundation models, not the last. Language is one primitive of intelligence – the one humans use to communicate and think. But the universe has other primitives. Newton created calculus because the language of the universe is not English. LLMs will let us interface with new foundation models that can push humanity’s understanding of the universe farther, giving us intuition for things our biology wasn’t evolved for. Such models are a critical part of a positive-sum future of AI.

This is the huge bet that we are making at Arena Physica. It’s our thesis. We are already building a strong business that helps companies better understand and iterate on their electromagnetic systems, but we think generalization will also allow us to build the IP Factory for RF.

With our loop, we can automate IP creation and design. This is the key distinction between our model and ARM’s. If generalization works, then instead of selling one design to many customers like ARM does, we can generate a unique design for each customer and each use case almost instantly, with roughly the same effort it takes ARM to create one-size-fits-all IP.

And we think, and are seeing early signs, that our model can scale this automation across the whole electromagnetic spectrum, in which case our TAM is anything with a wave.

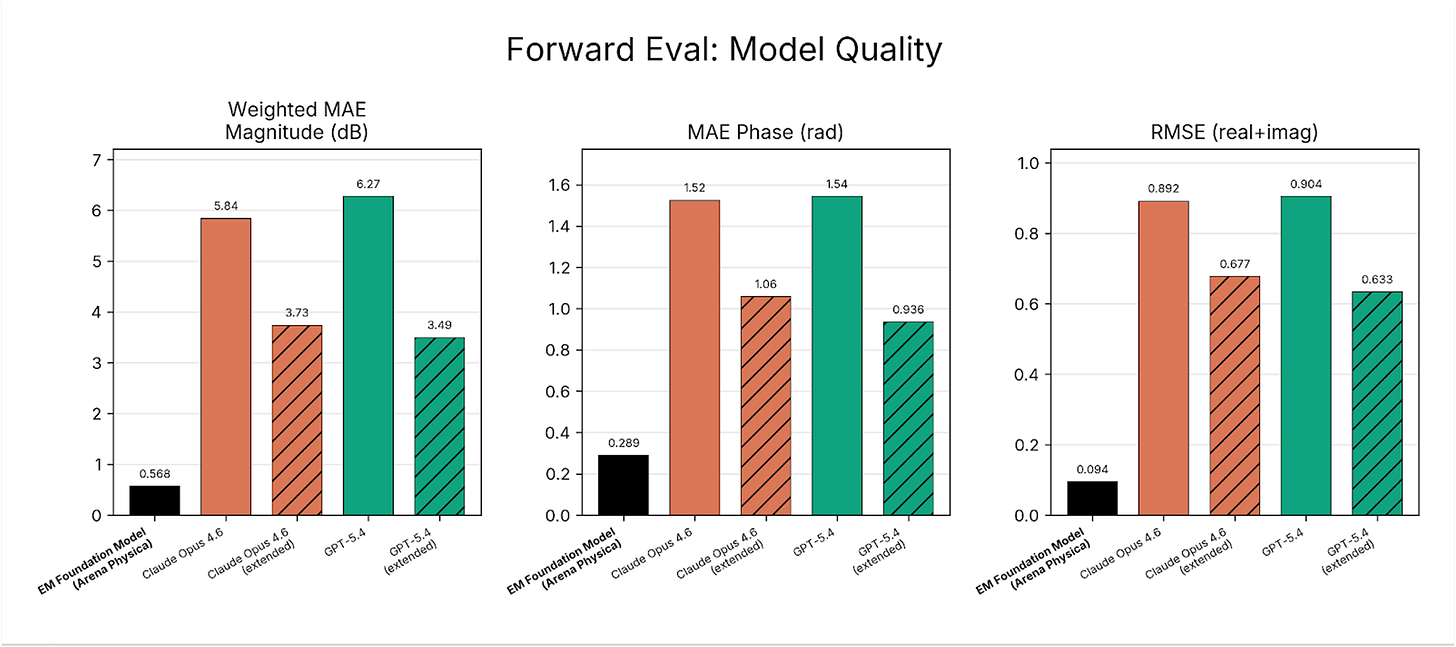

One question we get often is: can’t LLMs just do this? We’ve been running our own internal tests against frontier LLMs (both regular and extended thinking), and their performance gap to our base model is substantial. Our model achieves a magnitude-weighted MAE (Mean Absolute Error) well under 1 dB (for context, the range that RF engineers typically care about spans roughly 20-30 dB, so <1 dB is a very strong result).[6]
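For readers who want a feel for what an error "in dB" means, here is a minimal sketch of one way such a metric could be computed. The exact definition of the magnitude-weighted MAE is not given here, so the weighting below (each frequency point weighted by its true linear magnitude) is an assumption, and the function name and sample values are hypothetical.

```python
import math


def magnitude_weighted_mae_db(s_true, s_pred):
    """Weighted mean of the absolute dB error between predicted and true S-parameters.

    Assumption: each point is weighted by its true linear magnitude. This is one
    plausible reading of "magnitude-weighted", not a confirmed definition.
    """
    weights = [abs(t) for t in s_true]
    errors_db = [
        abs(20.0 * math.log10(abs(p)) - 20.0 * math.log10(abs(t)))
        for t, p in zip(s_true, s_pred)
    ]
    return sum(w * e for w, e in zip(weights, errors_db)) / sum(weights)


# Hypothetical complex S21 samples at a few frequency points.
s_true = [0.9 + 0.1j, 0.5 - 0.2j, 0.05 + 0.01j, 0.01j]
# A prediction whose magnitude is uniformly 1 dB too strong.
s_pred = [t * 10 ** (1.0 / 20.0) for t in s_true]

score = magnitude_weighted_mae_db(s_true, s_pred)
print(f"magnitude-weighted MAE: {score:.3f} dB")
```

In this toy case every point is off by exactly 1 dB, so the weighted average is 1 dB regardless of the weights. With real predictions the weighting matters, because it decides how much errors in the strong parts of the response count relative to errors deep in the noise floor.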

What we’re building is different from LLMs, and better at what it was built to do. A useful way to think about what this unlocks is as a “Compiler for Atoms.”

In software, compilers translate high-level programming languages into binary instruction sets that a CPU can execute. We went from assembly to C++ to Python, and now Claude Code is arguably a compiler with English as the programming language. The LLM compiles down to the programming language it decides is best, which then compiles down further to machine code. At each step in this progression, the abstraction gets higher and the number of people who can “program” gets larger.

Physics doesn’t have a compiler yet. The universe has an instruction set: materials and geometries arranged in specific configurations. Place them one way, you get a motor. Place them another, you get an invisibility cloak. We know all of this is possible because the equations tell us so. Human physicists have spent centuries learning this instruction set. But to access any of it, you still need to hire the equivalent of an assembly language programmer: a physicist who has spent decades learning to translate between human intent and the physical world’s instruction set.

What we’re building at Arena Physica is, in some sense, a Compiler for Atoms: a way to express what you want in high-level terms and have it compiled down into the geometries and materials that produce it, starting with Maxwell’s equations and eventually, we hope, adding Schrödinger’s.

When we launch our EM foundation model next week, we’ll make it available to interact with via an agentic UI. You’ll be able to type a request in plain English like, “I need an eight gigahertz band pass filter for a satellite uplink.” The LLM translates that into target scattering parameters, the technical parameters we’re optimizing for. Our LFM generates candidate geometries using physics as its reasoning substrate rather than language. Then, the LLM engages again to explain what the model did and why, drawing on the foundational RF engineering knowledge we’ve built into the system.
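As a rough illustration of the first step, here is what "translating a request into target scattering parameters" might look like as a data structure. This is a sketch only: `BandPassSpec` and `target_s21_db` are hypothetical names, and the bandwidth, passband loss, and stopband rejection are assumed values, since the plain-English request does not state them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BandPassSpec:
    """Hypothetical structured form of 'an 8 GHz band-pass filter'."""
    center_ghz: float
    bandwidth_ghz: float              # assumed; not stated in the request
    max_passband_loss_db: float       # assumed insertion-loss budget
    min_stopband_rejection_db: float  # assumed rejection requirement


def target_s21_db(spec, freqs_ghz):
    """Per-frequency |S21| target in dB: near 0 dB in band, strongly negative out of band."""
    lo = spec.center_ghz - spec.bandwidth_ghz / 2
    hi = spec.center_ghz + spec.bandwidth_ghz / 2
    return [
        -spec.max_passband_loss_db if lo <= f <= hi else -spec.min_stopband_rejection_db
        for f in freqs_ghz
    ]


spec = BandPassSpec(center_ghz=8.0, bandwidth_ghz=0.5,
                    max_passband_loss_db=1.0, min_stopband_rejection_db=30.0)
freqs = [7.0, 7.5, 8.0, 8.5, 9.0]
mask = target_s21_db(spec, freqs)
print(list(zip(freqs, mask)))
```

A real specification would also include transition bands, return loss (S11), and size or material constraints. The sketch only shows the shape of the hand-off: language in, a numeric target out, which a generator can then be scored against.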

Andrej Karpathy says LLMs are “people spirits.” In our system, the spirit of David Pozar, author of the bible of RF engineering, provides his best explanation of the geometries generated by our EM foundation model. It’s like working with an intern who happens to speak electron, except the intern is channeling decades of accumulated RF wisdom.

I think this will be a powerful paradigm for the future. Everyone is thinking about human-to-model interactions. But the bulk of the work in systems like ours is model-to-model: the LLM talking to the EM foundation model, the EM foundation model responding, the two iterating through design space at machine speed. The human-to-model layer becomes a minority of the interactions, the intent-setting and interpretation. The real work happens in a language we can’t speak, translated for us by models that can.

In the future, language models might serve as the universal interface between humans and an entire ecosystem of specialized foundation models: for EM fields, for biology, for materials science. In this future, the LLM becomes the diplomat between species of intelligence.

Eventually, we want to get to a world in which anyone can say, “I want an invisibility cloak” or “I want a cheaper Starlink,” and the machine will design it. But today, even with our compiler, we’re still in the C++ era. Expert “physics coders” still need to tell the machine, “I need an 8 gigahertz band pass filter for a satellite uplink.”

In the meantime, we want to deliver that “eventually” future today.

Taking Problems Off Customers’ Plates Entirely

Since it became clear that our model had the potential to scale and generalize, I’ve been thinking a lot about the right way to deliver the capabilities it provides to customers.

I’ve thought about whether we should build the ARM for Analog. I’ve thought about selling access to the model, or the IP Factory, directly. I don’t think either is quite right. I think it’s worth talking through where we landed and how, because what constitutes the right business model is changing a lot with AI, and the model we landed on is probably not the one we would have pursued a few years ago.

If you think through what makes Arena Physica unique, it’s really four things: we have a talent-dense collection of some of the world’s best RF engineers, a “Compiler for Atoms” that can generate valuable IP in experts’ hands, a Data Factory that improves as those RF engineers generate and validate more IP, and a software platform (complete with FDEs) that makes it easy to apply LLM-based agents to reason about hardware.

The business model that falls out of that is services. We’ve hired some of the world’s best RF designers and a team of excellent electrical engineers. Each one, working with our EM foundation model, with our agents, and with LLMs, can cover enormous ground. So instead of selling people the tools and asking them to figure it out on their own, we’re starting to just take problems off people’s plates.

You want to launch a space company and you have an unsolved problem with how your racks communicate in orbit? We’ll solve that for you. You need a silicon layout for your next chip? We’ll do the layout. We send in RF and electrical engineers, armed with our tools, and deliver the product the customer needs. It’s full-stack electromagnetic engineering as a service, at a speed and cost that wasn’t possible before, because each of these rare experts can now do what used to require an entire team, faster, better, and cheaper.

And because we’re so early in our journey to build the EM foundation model, working directly with our customers lets us learn faster and improve our models based on real-world needs.

To that end, we’re also pursuing research partnerships. This falls out of our model, too. As we scale the LFM from version one to version two and beyond, we need to decide which training data to generate next, and that depends on the problems we’re solving. Partners working on chip packaging need us to model different structures than partners working on superconducting quantum computing. They get early access to our model and our team for their specific problems, and we get the data we need to drive generalization. The research partnerships feed the Data Factory, the Data Factory feeds the model, and we eat more of the EM spectrum.

One counterintuitive move here is that we don’t plan to sell the model. We plan to publish it, and sell everything around it: the platform, the experts, and solutions to our customers’ problems. The model is what makes all of that possible, but it’s not the product. As Packy wrote in Power in the Age of Intelligence, “If your technology is so good, why aren’t you using it to compete?” Our product is: bring us your electromagnetic problem, and we’ll solve it.

Companies shouldn’t be bottlenecked by the fact that, as it stands, you need hundreds of millions of dollars to build the types of systems we can build. We should be able to almost AWSify expertise for them.

There’s a lot more I want to do here.

As our model improves, and we move from the C++ to Python and even Claude era of EM foundation models, I suspect our business model changes, too. We can sell the “designer” to companies and they can use it to generate their own IP. A sort of Golden Analog Silicon Goose. As that happens, the cost to manipulate the EM spectrum goes down, and humanity’s capabilities increase.

Over the longer term, my dream is to run Arena Physica as a modern Bell Labs, to use the commercial side of the business to fund a new kind of research network.

What if, once we’ve proven the foundation model works, we opened it up? We could give academics free access to our model and our compute. In exchange, when they use it to design novel structures or discover new phenomena, the IP flows back through Arena. We take a cut (maybe 20%, like an app store) and they keep the rest.

Right now, a professor working on some exotic antenna geometry has to write grants, wait for funding, hire grad students, and slowly iterate through simulations on whatever compute they can scrounge. What if instead they could just... use the model? Explore design spaces that would take years to search manually? And get paid when their discoveries become commercially valuable?

If our model can auto-generate IP for any electromagnetic application, we become the platform. The rare humans who can push the boundaries become contributors, and get rewarded for it as their rare knowledge turns scalable. Because the universe of people with this expertise is so small, we can actually get our arms around sharing the upside of their work with them.

Of course, as we expand across the EM spectrum, and our models become multiphysics models, we’re not going to hire all of the world’s great physicists. But by opening up the platform and becoming a new kind of Bell Labs, we can work with them.

I want Arena to be a place where academics can sabbatical in and access our models. Where we’re not just selling software, but funding experiments. Where we create incentive structures that let brilliant people do fundamental research without the soul-crushing grant cycle. Where we compress the cycle between physics research and application, and wield AI not to do what humans can do cheaper, but to do things humans can’t do at all today. If we win, humanity benefits.

But I’m getting ahead of myself. First, we need to take problems off of our customers’ plates.

What Can Our Customers Build?

So what could our customers build if we provide them best-in-class RF and EE?

To start, by working with Arena Physica, any company that wants to do anything with phased arrays can get custom ones, and much more quickly than they could have before.

What they’ll be able to build isn’t limited to what you’d traditionally think of as radar.

I heard a phrase once that stuck with me. I’ve always thought of radar as a detection system, but this guy told me that radar is actually an imaging system. As the wavelength gets shorter, you get higher resolution. You can image things. So radar doesn’t just tell you that “something is there.” It also tells you what that something looks like. Radar can create images, like a camera.

Take drones. Everyone is talking about drones as the future of warfare, but currently, we can’t see them with radars or sensors. It might surprise you to learn that the United States Navy doesn’t have counter-drone phased array radar at scale. That surprised me too, so when we dug a little bit, what we heard was that in the current system, it’s just too expensive. To get Raytheon to build a new ship-based phased array radar for them would end up costing as much as half the ship.

It should not cost close to a billion dollars to make phased array radars. But remember that slow process of imagining and simulating designs that we discussed earlier? Now, imagine that happening inside of a slow-moving legacy prime that gets paid a margin on top of every dollar it spends. The result is that our Navy doesn’t have phased array radars that are good at detecting drones.

We can help the Navy see drones much more cheaply. And we should. For drone detection, you might have 1,000 incoming targets. The benefit of phased arrays is that you can form multiple beams from one antenna, like Starlink does, instead of something spinning like an old-timey radar or LiDAR. (As a side note, those spinning LiDARs on top of Waymos will almost certainly become phased arrays, too, and when they do, the whole unit goes solid-state, which is cheaper and more reliable with no moving parts.)

We also have people reaching out to us who want to design phased arrays for drone capture. They’re building systems that catch drones in mid-air with a robot arm. The drone capture systems are smart; they have onboard computing and sensors to actively track and intercept the incoming drone. They need to be able to see, which means they need custom phased arrays that can track a fast-moving drone with high precision, work in any visual conditions, and are small and cheap enough to put on the capture mechanism itself. They certainly couldn’t make the math work at a billion dollars, but we can bring those costs down at least an order of magnitude in the near-term with automated design and fast iteration.

The applications only multiply once you realize that you’re dealing with an all-condition imaging system, and that drones are just one application of phased arrays. The same physics that steers radar beams also steers communication beams.

If space stuff continues to grow the way everyone thinks it will, you’re talking about tens of thousands of satellites and millions of ground terminals. Every single one needs these precisely shaped silicon tiles. Every ground station needs phased arrays. Northwood just raised a ton of money to build phased array ground stations. Every Starlink antenna on a house and satellite is powered by phased arrays.

Now, let’s say an adversary is trying to jam your radar signal. Notice how these little things dive down to zero:

Remember I said you can dynamically move this beam? This is one of the benefits of the phased array. It lets you move those points. Those are called null points, and it’s where the interference pattern zeroes out, like your noise canceling headphones. So imagine I’m being jammed. What’s crazy cool about a phased array is that I can transmit my signal, and then I can cyclically move my null point to absorb the jam signal. Just think about it. It’s remarkable, actually. You’re still transmitting and receiving, but you’ve carved out a little pocket of silence right where the enemy is screaming at you.
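Here is a minimal sketch of that "pocket of silence" for an idealized array. The assumptions are mine: eight identical elements in a line, spaced half a wavelength apart, with a signal at 0 degrees and a jammer at 20 degrees. The weights point at the signal and then subtract whatever part of them responds to the jammer's direction, a textbook projection technique rather than a description of any particular product.

```python
import cmath
import math

N = 8  # number of antenna elements, spaced half a wavelength apart (assumed)


def steering_vector(theta_deg):
    """Per-element phase of a plane wave arriving from theta (0 = broadside)."""
    psi = math.pi * math.sin(math.radians(theta_deg))  # k*d*sin(theta) with d = lambda/2
    return [cmath.exp(1j * n * psi) for n in range(N)]


def inner(a, b):
    """Hermitian inner product a^H b."""
    return sum(x.conjugate() * y for x, y in zip(a, b))


def null_steered_weights(signal_deg, jammer_deg):
    """Point at the signal, then remove the component that responds to the jammer."""
    a_s = steering_vector(signal_deg)
    a_j = steering_vector(jammer_deg)
    overlap = inner(a_j, a_s) / N
    return [s - overlap * j for s, j in zip(a_s, a_j)]


def response(weights, theta_deg):
    """Magnitude of the array's response toward theta."""
    return abs(inner(weights, steering_vector(theta_deg)))


weights = null_steered_weights(signal_deg=0.0, jammer_deg=20.0)
toward_signal = response(weights, 0.0)
toward_jammer = response(weights, 20.0)
print(f"toward signal: {toward_signal:.2f} (max possible {N}), toward jammer: {toward_jammer:.1e}")
```

The array keeps nearly all of its gain toward the signal while its response toward the jammer drops to numerical zero. Recomputing the weights for a new jammer angle moves the null, with no moving parts involved.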

But if you can exploit the physics really well—like, in this case, we’re still using our good old digital transistors to say what to do, while the analog shapes determine the quality of the EM fields produced—this is where Gordon Moore’s dream might come true: his silicon boolean transistors are talking to analog silicon. And that’s really cool. With digital silicon, we can compute. But with analog silicon, we can transmit power, we can transmit directed energy, we can absorb energy. Analog silicon makes it much more physical.

The same physics that lets you communicate also lets you deny communication to others. And the same physics that lets you transmit also lets you absorb. Stealth is just the inverse problem of radar: instead of bouncing signals back, you’re making them disappear.

It’s all shapes.

Imagine we could change the economic structure of all of this.

That is exactly what we are trying to do at Arena Physica. Our mission is to create “Electromagnetic Superintelligence.” It sounds audacious, but remember, it is much easier to achieve superintelligence—as in, relative to humans—in electromagnetism than it is in language or even math. And it describes precisely what we’re building: a system that develops superhuman intuition for how geometry shapes electromagnetic fields, a mind that can see what we can’t.

As a software engineer, I don’t know how to be an infrastructure engineer. That’s because I don’t need to. Amazon takes care of it for me. If you could say companies no longer need RF expertise, you could lower the cost of everything we’ve discussed dramatically by a factor of 10 or more. We could give RF capabilities to everyone, from small companies to those that serve the Navy.

One obvious ramification is we’ll probably see more satellite companies and space companies, because they can now design their own phased arrays. More competition in the radar space. More competition in the jamming space.

Another less obvious one might be backpack radars. Think about troops going into a situation like Ukraine. One of the big risks they face is drones sneaking up on them. They should have backpack-mounted counter-drone radar: small, cheap phased arrays that let every warfighter see what’s coming.

Backpack radars are a very specific thing, but the point is that they’re something that made no practical or economic sense before and that now becomes practically and economically feasible.

We can even help AI models get better in a sort of indirect way.

Data centers need to move enormous amounts of data between chips very fast. The problem is that, at those speeds, the wires connecting chips start acting like antennas. They can accidentally broadcast and pick up signals. Which means they hit bandwidth limits on chip-to-chip communication. So their goal is the opposite of ours: they’re trying to make really bad antennas. They don’t want the electron traveling between their GPU and CPU to pick up a signal or transmit one, because then the data gets garbled. This is called signal integrity. The solution is the same shaped-silicon approach: carefully designed structures that guide high-frequency signals without interference.

It’s not just chip-to-chip. Think bigger. A data center company recently asked us whether we could beam data rack-to-rack wirelessly, because the cabling itself is becoming a bottleneck to how fast they can deploy. It’s not just terrestrial, either. For orbital data centers, there won’t be any option. You’re not going to be running optical cables between racks in space.

The most interesting thing is how the market has responded by asking us for use cases we would never have thought of.

For example, high-frequency trading firms have reached out to us about helping them trade faster. I’d assumed fiber optics already transmitted at the speed of light, but because glass has a higher refractive index than air, signals in fiber travel at only 60-70% of light speed. A phased array transmitting through free space goes at essentially the full speed of light. Over the distance between New York and Chicago, that difference could be enough to make a lot of money.
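A back-of-the-envelope sketch of that gap. The inputs are assumptions: a straight-line New York to Chicago distance of about 1,145 km (real fiber routes are longer, which only widens the gap) and a typical refractive index of 1.47 for silica fiber. Only propagation delay is modeled, with no equipment or switching latency.

```python
C_VACUUM_M_PER_S = 299_792_458   # speed of light in vacuum (exact, by definition)
FIBER_REFRACTIVE_INDEX = 1.47    # assumed typical value for silica fiber
NY_CHICAGO_KM = 1_145            # assumed straight-line distance


def one_way_latency_ms(distance_km, refractive_index=1.0):
    """Propagation delay only: distance divided by the speed of light in the medium."""
    speed_m_per_s = C_VACUUM_M_PER_S / refractive_index
    return distance_km * 1_000 / speed_m_per_s * 1_000


fiber_ms = one_way_latency_ms(NY_CHICAGO_KM, FIBER_REFRACTIVE_INDEX)
free_space_ms = one_way_latency_ms(NY_CHICAGO_KM)
advantage_ms = fiber_ms - free_space_ms

print(f"fiber: {fiber_ms:.2f} ms, free space: {free_space_ms:.2f} ms, "
      f"advantage: {advantage_ms:.2f} ms one way")
```

Under these assumptions the free-space link arrives a little under two milliseconds earlier in each direction, which is a very long time for a trading system that competes on microseconds.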

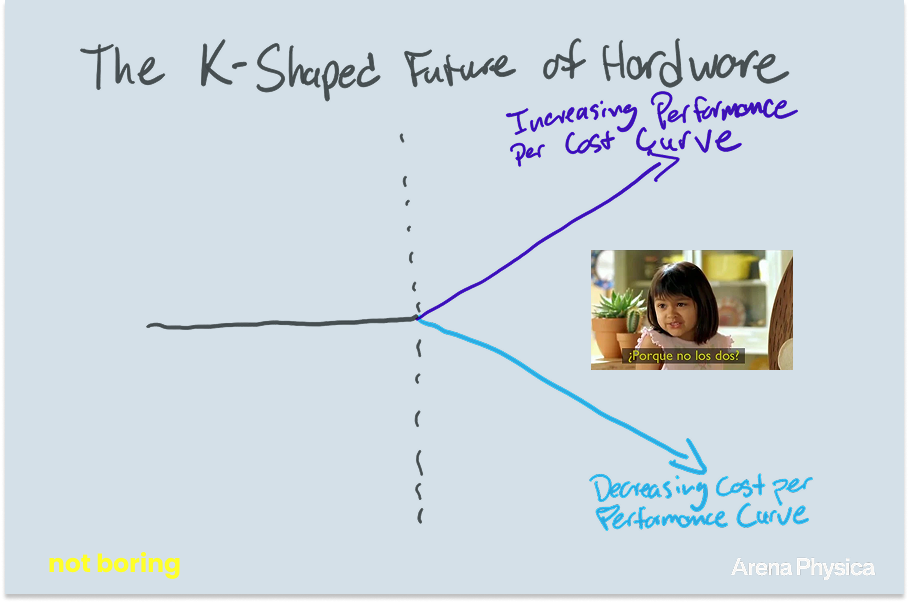

If you look at what we’ve just described, there are really two different things happening. I think we’re going to see a K-shaped future for hardware.

The lower leg of the K is making commodity devices dramatically cheaper and more accessible. For example: the Navy acquiring drone-detection radar without writing a billion-dollar check to Raytheon, satellite startups designing their own phased arrays instead of outsourcing to a prime, backpack-mounted counter-drone radar for every warfighter, and data centers deploying faster with wireless rack-to-rack links. These are capabilities that exist today only in expensive, bottlenecked forms. We want to remove these bottlenecks and make the capabilities cheaper and more abundant.

The upper leg of the K is making exquisite devices, at the frontier of what’s physically possible, newly achievable. This is what Bell Labs enabled: entirely new and more powerful capabilities than were possible before their research. There is a lot of excitement about cheap, attritable systems in the future of warfare, and rightly so. But in the conversations I’ve had with military leadership, they believe that to win (or deter) a conflict in the Indo-Pacific, we’re going to need some of the world’s most exquisite machinery. The F-117 was crucial to winning the Gulf War; it made up just 2.5% of coalition air power but destroyed 40% of all strategic targets. We want to make new types of exquisite hardware possible, for defense and beyond.

By making it easier, faster, and cheaper to design more capable RF components, we think we’ll help expand the market beyond current analyst estimates, which don’t anticipate the unimagined. Those estimates project a 12% CAGR for RF components, from $44.8 billion to $140.5 billion over the next decade. I think that’s wrong, almost certainly too low. One of the reasons they’re projecting relatively slow growth is that RF is just too hard today. But if you democratize the expertise and transform the cost structure, would everyone just add better all-weather sensing equipment onto their robot? Would everyone just add backpack-mounted radars to every soldier?
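As a quick sanity check on those analyst numbers, the growth rate implied by the two endpoints can be computed directly. The only assumption is that "the next decade" means exactly ten years.

```python
def implied_cagr(start, end, years):
    """Compound annual growth rate implied by a start value, an end value, and a horizon."""
    return (end / start) ** (1.0 / years) - 1.0


# Figures in billions of dollars, taken from the text; a 10-year horizon is assumed.
analyst_cagr = implied_cagr(44.8, 140.5, 10)
print(f"implied CAGR: {analyst_cagr:.1%}")
```

The endpoints are consistent with the quoted growth rate of about 12% a year, so the disagreement here is with the forecast itself, not with its arithmetic.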

What I’m most excited about is this open possibility space. I don’t even know what else people might think up now that they have the ability to manipulate this stuff. If we’re right, their ambitions won’t be limited by speed, economics, or even by the shapes required to deliver the capabilities that they need.

Alien Designs

A lot of the shapes coming out of our system already look nothing like what a human would design. Basically, we’re working with near-alien humans to create a system that will ultimately produce alien designs.

Human RF engineers have been trained on certain canonical structures: dipoles, patches, spirals, horns. They know these shapes work because they’ve been refined over decades. When they design something new, they start from these familiar forms and tweak them.

Our system doesn’t care about any of that. It starts from noise and evolves toward function. The results often look like QR codes, or random stippling, or structures that seem to follow no logic at all.

When we show these designs to expert RF engineers, their first reaction is usually skepticism. “That doesn’t look like an antenna.” “I would never have come up with that.” “Are you sure this works?”

Then, we fabricate it.

Today, we’re fabricating at the PCB level. The goal I have for the team is that we’ll do our first silicon tapeout this year. As in, we’ll manufacture actual silicon chips. Analog silicon has the advantage that we don’t need TSMC’s bleeding-edge fabs; older, cheaper fabs like those at Samsung, GlobalFoundries, and some defense foundries can do it, because the node size is typically larger.

And it works. This is the AlphaGo moment. Remember the expert commentary on Move 37?

We’re seeing the same pattern. Engineers look at our designs and say, “That’s super unconventional. That’s unusual. That’s not in the textbook.” And then it works. And then they say it was “creative.”

Our goal is to do more than just match human performance autonomously. We want to exceed it and find all of the EM equivalents of Move 37: designs so counterintuitive that no human would have tried them, but so effective that they outperform anything we would have tried.

My hunch is that this will happen fairly quickly in EM because of what we discussed at the top: most humans didn’t evolve to intuit which shapes produce which EM waves. Therefore, we are terrible at intuiting the answer. On the other hand, computers can become superhuman — we can evolve them to get there in simulation.

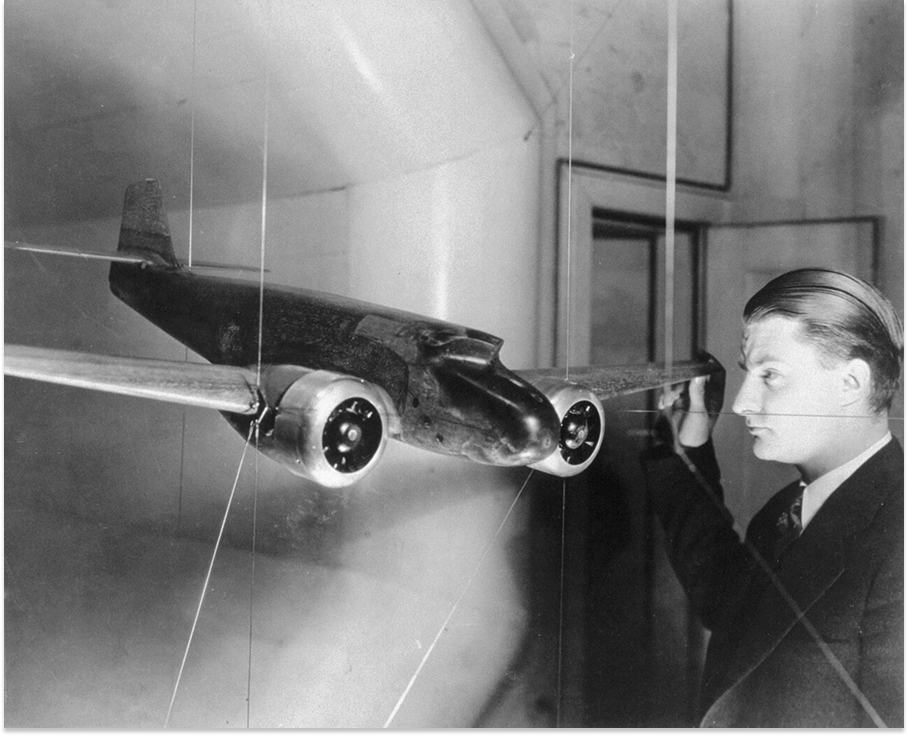

There’s this great story in Skunk Works, Ben Rich’s personal memoir from his time atop the famed Lockheed division, about how the F-117 stealth bomber came to be.

A thirty-six-year-old mathematician and radar specialist named Denys Overholser happened to read a translation of a dense technical paper by Pyotr Ufimtsev, the chief scientist at the Moscow Institute of Radio Engineering, titled Method of Edge Waves in the Physical Theory of Diffraction. Ufimtsev had “revisited a century-old set of formulas derived by Scottish physicist James Clerk Maxwell… these calculations predicted the manner in which a given geometric configuration would reflect electromagnetic radiation” and took them a step further. “Ben,” Overholser told Ben Rich, “this guy has shown us how to accurately calculate radar cross sections across the surface of the wing and at the edge of the wing, and put together these calculations for an accurate total.”

With Ufimtsev’s work, the Skunk Works team could create computer software to calculate the radar cross section (how visible an object is to radar) as long as the shapes were in two dimensions. If they designed the bomber as thousands of flat triangles, they could add them all up and get the radar cross section.

That’s exactly what Overholser did, and the design that emerged “was a diamond beveled in four directions, creating in essence four triangles,” which, viewed from above, “closely resembled an Indian Arrowhead.”

He called it the Hopeless Diamond, and calculated that it would be “one thousand times less visible than the least visible shape previously produced at the Skunk Works.” On a radar screen, it would appear to be the size of an eagle’s eyeball.

Kelly Johnson was the Skunk Works founder and boss, a man so magnificent at airplane design that his own boss said of him, “That damn Swede can actually see air.”

He was so unimpressed by the design that, when he saw it, he physically kicked Rich in the butt, crumpled the proposal, threw it at Rich’s feet, and stormed, “Ben Rich, you dumb shit. Have you lost your goddamned mind? This crap will never get off the ground.”

As it turned out, the model was right and even the great Kelly Johnson was wrong. The Hopeless Diamond became the F-117 Nighthawk, the stealthiest plane built to date by more than three orders of magnitude, and flew over 1,300 sorties during the 1991 Gulf War without a single combat loss.

The F-117’s geometry was alien, and it gave the US otherworldly capabilities.

We’re attempting to do something similar, but at silicon scale (to start). And with AI doing the search instead of human engineers sketching on whiteboards, we’re planning to do it over much wider problem spaces and search spaces than currently exist.

The Shape of Things to Come

We have a lot of work ahead of us to solve the core problem: building a Large Field Model that can do for EM what LLMs did for language.

The simulation has to get faster. The generative model has to get smarter. The fabrication loop has to tighten, and we need to actually fab analog silicon, and then we need to do it for a lot of customers. We need to hire more of those rare humans who think like electrons and work with them to create training data.

It’s hard to see what our models can do today and not imagine what they might be able to do in the future, though.

If it scales across the entire EM spectrum like we think it will, things will get very interesting.

How interesting? Packy asked me whether our models might one day help finally produce a Grand Unified Theory, if one is possible.

I don’t know. But here’s how I think about discovering new physics more broadly.

All of our tools today — since the dawn of computers — have been about deduction. Solve, compute, calculate, predict. Here’s an input; tell me what happens. But a lot of really creative human reasoning is inductive. You postulate a question or an idea, and then you research it. That’s deeply creative and deeply human.

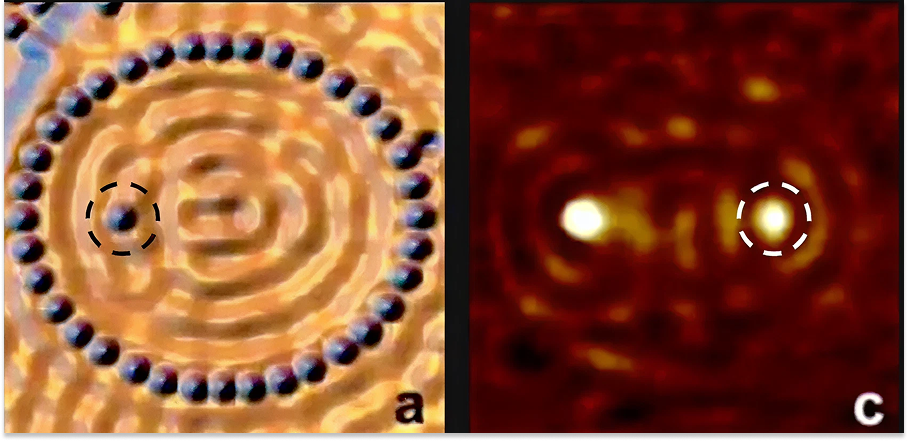

This connects to something personal for me. My undergraduate research advisor at Stanford, Hari Manoharan, did an experiment that made the cover of Nature in 2000.

He arranged 80 cobalt atoms in an ellipse on a copper surface. You know those whispering galleries where you stand in a corner and whisper and someone on the other side can hear you? That’s constructive interference of sound waves. Hari knew that electrons also behave like waves, and he suspected they should interfere in the same way.

And that’s exactly what he saw. A “quantum mirage.” A ghost atom appearing at one focus when a real atom sat at the other. What blew everyone’s mind was that there was no time delay. Physics predicted some tiny delay to account for information traveling at the speed of light. Instead, it was instantaneous. This kicked off a whole field of quantum communication research.

Hari knew the Schrödinger equation. He knew Maxwell’s equations. Everybody knew those equations. But he combined them in a way no one had thought to try, postulated what might happen, and built an experiment to test it. That’s how new physics happens.

How amazing would it be if we had machines that could actually understand these equations and help us? Currently, we have to wait decades for a genius to come along and push a field forward. What could we benefit from if those geniuses had a little help? How much closer could we pull the future? How much more of the universe could we understand in our lifetimes?

What if our foundation model could start making those leaps? Right now, it’s not yet breakthrough inductive, meaning it can’t create its own experiments. It’s like an applied physicist: we can tell it our engineering goal and it will come up with a design. But as it learns more, develops something like intuition, could the model start postulating? Could it notice patterns humans have missed and suggest experiments?

And maybe (this is where I let myself dream), maybe if we build foundation models for each of the fundamental forces, and they start talking to each other, we get closer to something bigger. There are four fundamental forces: strong, weak, gravity, electromagnetism. We’re just trying to make a dent in one of them. But physics is deeply interconnected. Hari’s quantum mirage happened because electromagnetism and quantum mechanics intersected in a way that nobody expected.

What happens when foundation models that understand multiple forces chain together, exploring the spaces between them? I can’t stop thinking about this.

Ultimately, that’s what this is about. It’s why I pursued my PhD in quantum electromagnetism for four years (then dropped out in true Silicon Valley fashion) and why I started Arena Physica.

I want to understand the nature of reality in order to manipulate it for the betterment of humanity. To do that, we need models that learn directly from physics, that develop intuitions we’ve never evolved to have.

One of the questions that came up in grad school (and I remember thinking, how do people even ask these questions?) was: Why does physics work? Why does math work? Isn’t it strange that reality is so... describable? Why isn’t it much more random?

Maybe these models will help us find out.

Electromagnetism secretly runs the world. We’ve been manipulating it for a century with our hands tied behind our backs, limited by the rarity of humans who can see what we cannot see.

We’re creating models to understand the interplay between geometries and electromagnetic waves, and evolving them to develop a new intuition.

The universe is made of fields. Fields are shaped by geometry. Geometry, it turns out, is something computers can learn much better than we can. We should lean on them so that we can get to new problems.

If we learn to shape the waves, we might be able to shape the future.

Big thanks to Pratap and the whole Arena Physica team for sharing their knowledge, and to Badal for the cover art.

That’s all for today. We’ll be back in your inbox with a Weekly Dose on Friday.

Thanks for reading,

Packy

Ten is the number that keeps coming up in conversations with people at companies where these people work today. The real number of world-class RF designers is probably in the low hundreds. They all seem to know each other by first name. The community is that small.

The programming term “bug” is popularly said to come from bugs crawling into vacuum tubes.

Tuxedo Park: A Wall Street Tycoon and the Secret Palace of Science That Changed the Course of World War II by Jennet Conant is a great book for those interested in learning more.

Read more of the ARM story in The Electric Slide.

For those who want the technical details, we will be releasing a technical blog post next week.

The metric is MAE (mean absolute error). The value we’re measuring here is an S-parameter (scattering parameter), which is a complex number with a real and an imaginary part. That’s why we separate out phase and magnitude.
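For readers who want to see what separating phase and magnitude looks like in practice, here is a minimal sketch of computing MAE on each. It is not our actual evaluation code: the function name `s_param_mae`, the sample values, and the choice to wrap phase differences around the circle are all assumptions made for illustration.

```python
import cmath
import math


def s_param_mae(predicted, measured):
    """Return (magnitude MAE, phase MAE in radians) for two lists of complex S-parameters."""
    if len(predicted) != len(measured) or not predicted:
        raise ValueError("inputs must be non-empty and the same length")
    mag_err = 0.0
    phase_err = 0.0
    for p, m in zip(predicted, measured):
        # Magnitude error: difference in the lengths of the two complex values.
        mag_err += abs(abs(p) - abs(m))
        # Phase error: wrap the difference into [-pi, pi] so that angles on
        # either side of the +/-pi boundary count as close, not far apart.
        diff = cmath.phase(p) - cmath.phase(m)
        diff = math.atan2(math.sin(diff), math.cos(diff))
        phase_err += abs(diff)
    n = len(predicted)
    return mag_err / n, phase_err / n


# Hypothetical values for illustration only, not real measurements.
predicted = [cmath.rect(0.50, 0.10), cmath.rect(0.80, 3.10)]
measured = [cmath.rect(0.40, 0.20), cmath.rect(0.90, -3.10)]

mag_mae, phase_mae = s_param_mae(predicted, measured)
print(f"magnitude MAE: {mag_mae:.4f}, phase MAE: {phase_mae:.4f} rad")
```

The second sample pair shows why the wrap matters: phases of 3.10 and -3.10 radians sit on opposite sides of the ±π boundary, so a naive subtraction would report an error of 6.2 radians when the two angles are actually less than 0.1 radians apart.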
